
Deep learning examples#

This notebook contains examples of neural network models.

Table of contents

Loading dataset

Architecture

Testing models

Baseline

DeepAR

DeepARNative

TFT

TFTNative

RNN

MLP

Deep State Model

N-BEATS Model

PatchTS Model

Chronos Model

Chronos Bolt Model

TimesFM Model

[1]:

!pip install "etna[torch,chronos,timesfm]" -q

[2]:

import warnings

warnings.filterwarnings("ignore")

[3]:

import random

import numpy as np

import pandas as pd

import torch

from etna.analysis import plot_backtest

from etna.datasets.tsdataset import TSDataset

from etna.metrics import MAE

from etna.metrics import MAPE

from etna.metrics import SMAPE

from etna.models import SeasonalMovingAverageModel

from etna.pipeline import Pipeline

from etna.transforms import DateFlagsTransform

from etna.transforms import LabelEncoderTransform

from etna.transforms import LagTransform

from etna.transforms import LinearTrendTransform

from etna.transforms import SegmentEncoderTransform

from etna.transforms import StandardScalerTransform

[4]:

def set_seed(seed: int = 42):

    """Set random seed for reproducibility."""

    random.seed(seed)

    np.random.seed(seed)

    torch.manual_seed(seed)

    torch.cuda.manual_seed_all(seed)

1. Loading dataset#

We will use a small toy dataset. Let’s load it and take a look.

[5]:

df = pd.read_csv("data/example_dataset.csv")

df.head()

[5]:

| | timestamp | segment | target |

|---|---|---|---|

| 0 | 2019-01-01 | segment_a | 170 |

| 1 | 2019-01-02 | segment_a | 243 |

| 2 | 2019-01-03 | segment_a | 267 |

| 3 | 2019-01-04 | segment_a | 287 |

| 4 | 2019-01-05 | segment_a | 279 |

Our library works with a special data structure, TSDataset. Let’s create one, as in the “Get started” notebook.

[6]:

ts = TSDataset(df, freq="D")

ts.head(5)

[6]:

| segment | segment_a | segment_b | segment_c | segment_d |

|---|---|---|---|---|

| feature | target | target | target | target |

| timestamp | | | | |

| 2019-01-01 | 170 | 102 | 92 | 238 |

| 2019-01-02 | 243 | 123 | 107 | 358 |

| 2019-01-03 | 267 | 130 | 103 | 366 |

| 2019-01-04 | 287 | 138 | 103 | 385 |

| 2019-01-05 | 279 | 137 | 104 | 384 |
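The two tables above show the same data in two layouts: one row per (timestamp, segment) pair, and one row per timestamp with a column per segment. The sketch below illustrates that reshaping in plain Python. It is only a conceptual illustration; `to_wide` is a hypothetical helper, not part of the library, and it says nothing about how TSDataset stores data internally.

```python
from collections import defaultdict

# Long-format rows: (timestamp, segment, target), values copied from the tables above.
long_rows = [
    ("2019-01-01", "segment_a", 170),
    ("2019-01-01", "segment_b", 102),
    ("2019-01-02", "segment_a", 243),
    ("2019-01-02", "segment_b", 123),
]


def to_wide(rows):
    """Pivot long rows into {timestamp: {segment: target}} (hypothetical helper)."""
    wide = defaultdict(dict)
    for timestamp, segment, target in rows:
        wide[timestamp][segment] = target
    return dict(wide)


wide = to_wide(long_rows)
print(wide["2019-01-02"])  # {'segment_a': 243, 'segment_b': 123}
```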

2. Architecture#

Our library has two types of models:

Models from PyTorch Forecasting

Native models.

First, let’s describe the pytorch-forecasting models, because they require special handling. There are two ways to use them: the default way, and through PytorchForecastingDatasetBuilder when you need extra features.

Let’s take a closer look at the PytorchForecastingDatasetBuilder class.

[7]:

from etna.models.nn.utils import PytorchForecastingDatasetBuilder

[8]:

?PytorchForecastingDatasetBuilder

We can see a pretty scary signature, but don’t panic, we will look at the most important parameters.

time_varying_known_reals: known real-valued features that change over time (real regressors); currently the “time_idx” variable must be added to this list;

time_varying_unknown_reals: our real-valued target, set it to ["target"];

max_prediction_length: our forecasting horizon;

max_encoder_length: the length of past context to use;

static_categoricals: static categorical values; for example, with multiple segments these can be segment characteristics, including the identifier “segment”;

time_varying_known_categoricals: known categorical values that change over time (categorical regressors);

target_normalizer: the class used to normalize targets across different segments.
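To make max_encoder_length and max_prediction_length more concrete, here is a minimal sketch of how a series can be cut into (past context, forecast target) windows. `make_windows` is a hypothetical helper written for illustration; the sampling actually performed by pytorch-forecasting may differ (for example, it can allow variable-length windows).

```python
def make_windows(series, encoder_length, decoder_length):
    """Slide over the series and return (encoder, decoder) pairs."""
    total = encoder_length + decoder_length
    windows = []
    for start in range(len(series) - total + 1):
        encoder = series[start : start + encoder_length]          # past context
        decoder = series[start + encoder_length : start + total]  # values to predict
        windows.append((encoder, decoder))
    return windows


series = list(range(10))
windows = make_windows(series, encoder_length=4, decoder_length=2)
print(len(windows))  # 5
print(windows[0])    # ([0, 1, 2, 3], [4, 5])
```

A longer encoder gives the model more history per sample but leaves fewer training windows on a short series.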

Our library currently supports these pytorch-forecasting models: DeepAR (DeepARModel) and TFT (TFTModel).

As for the native neural network models, they are simpler to use, because they don’t require PytorchForecastingDatasetBuilder. We will see how to use them in the examples.

3. Testing models#

In this section we will test our models on the example dataset.

[9]:

HORIZON = 7

metrics = [SMAPE(), MAPE(), MAE()]

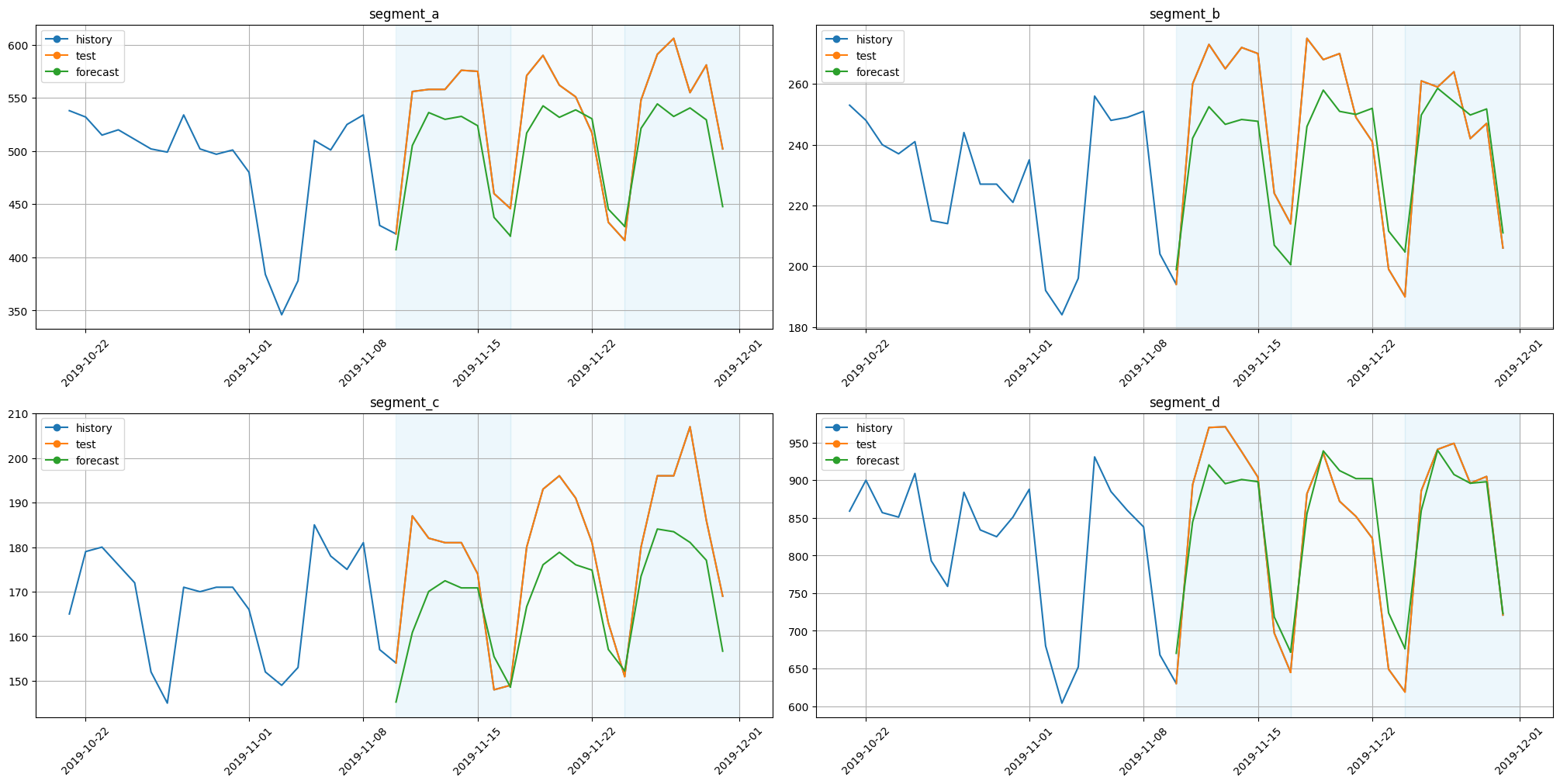

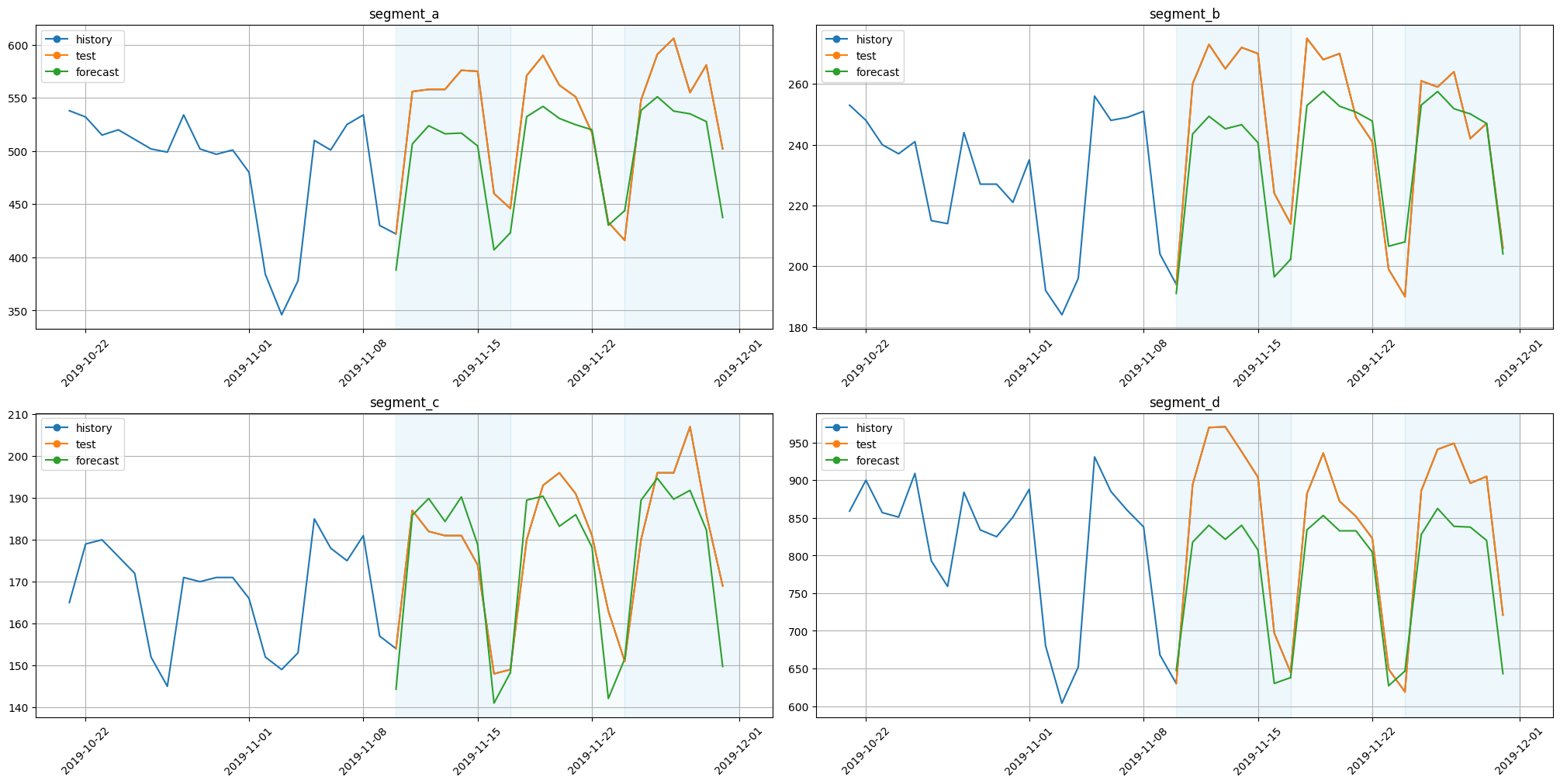

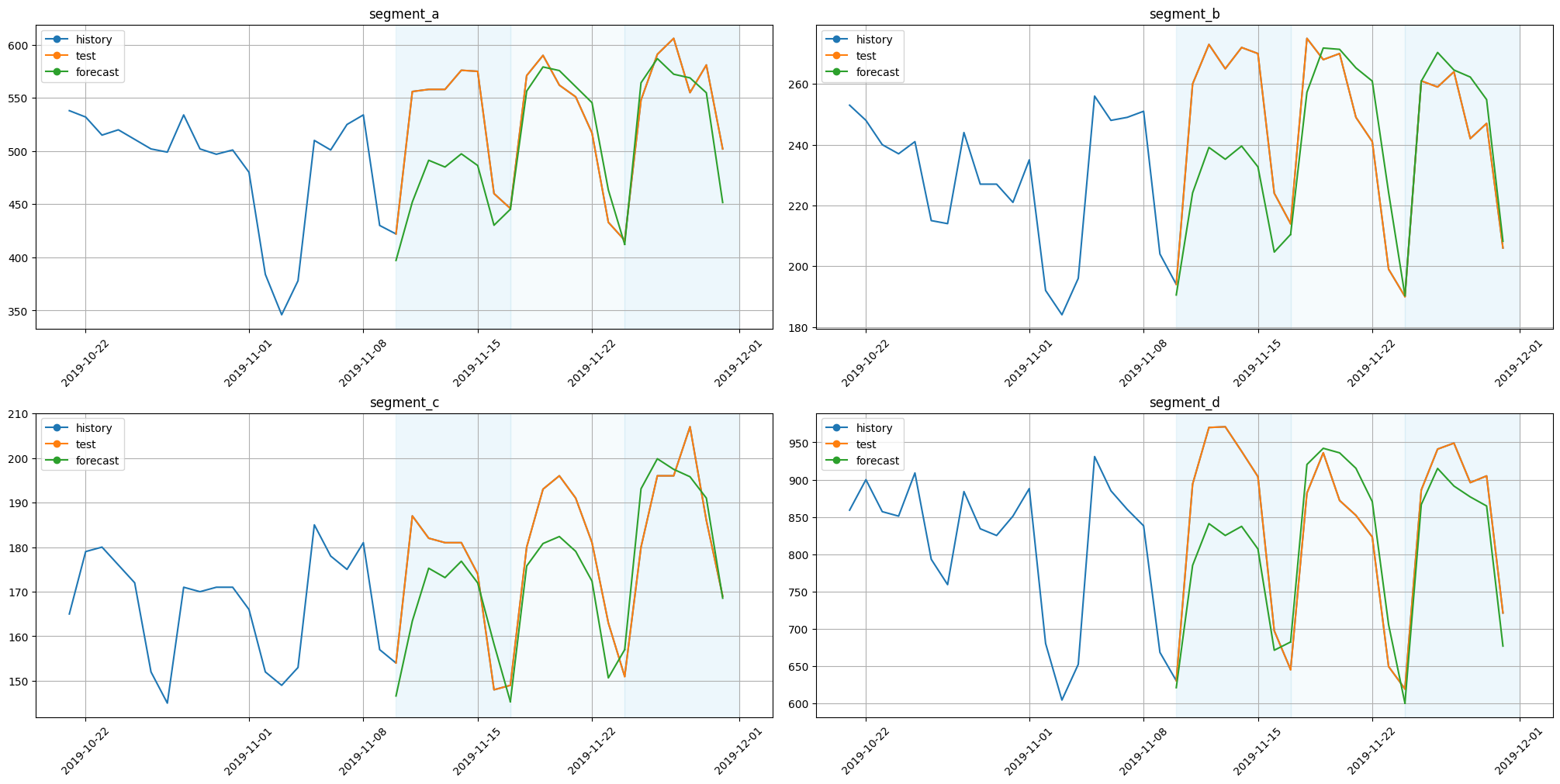

3.1 Baseline#

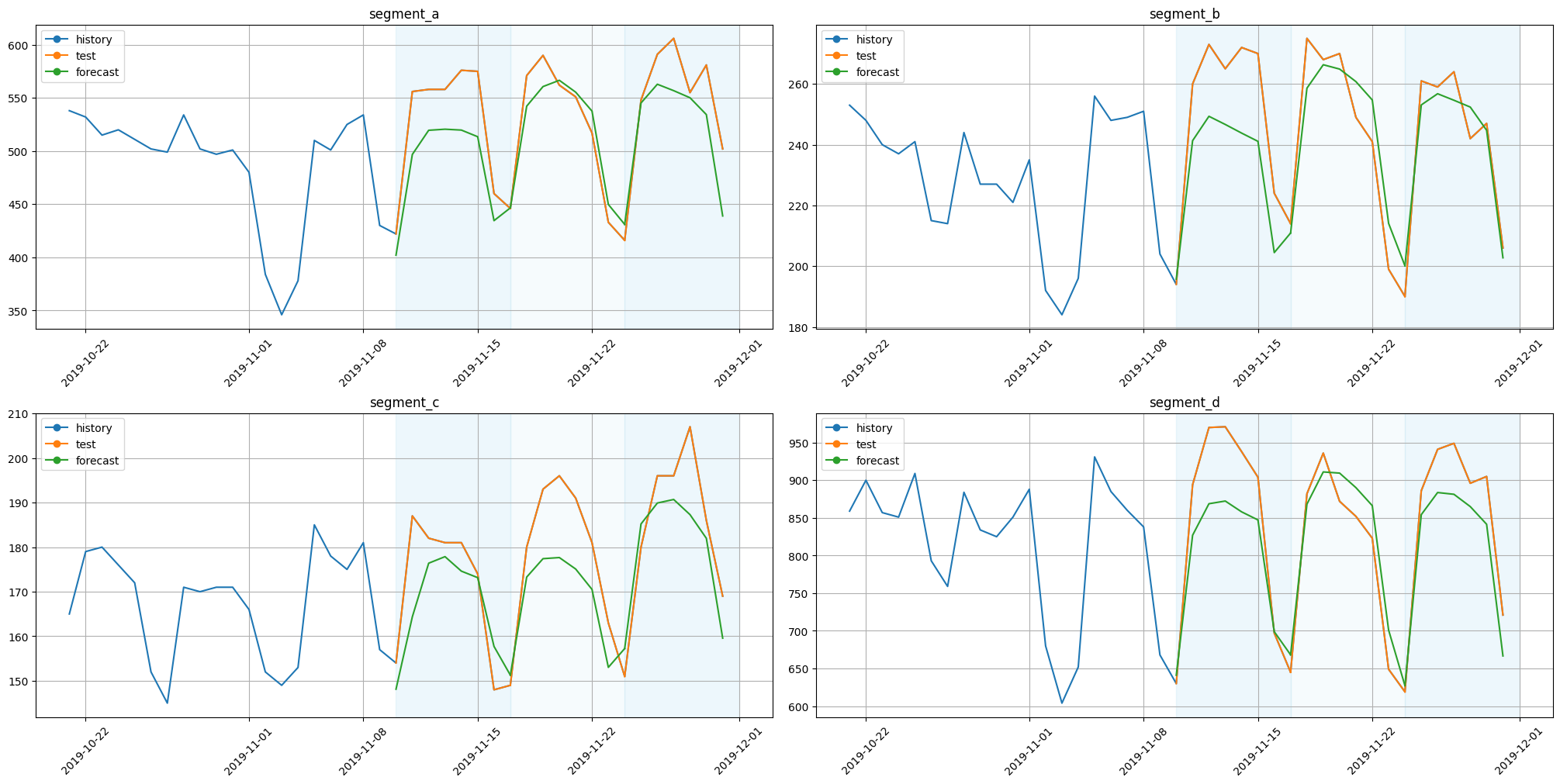

For comparison, let’s train a simple model as a baseline.

[10]:

model_sma = SeasonalMovingAverageModel(window=5, seasonality=7)

linear_trend_transform = LinearTrendTransform(in_column="target")

pipeline_sma = Pipeline(model=model_sma, horizon=HORIZON, transforms=[linear_trend_transform])
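As a rough illustration of what the baseline computes, the sketch below implements a seasonal moving average under the usual definition: each forecast is the mean of the last `window` values taken one season apart. `seasonal_moving_average` is a hypothetical stand-in, not the library's implementation, and details such as how the history is handled may differ in SeasonalMovingAverageModel.

```python
def seasonal_moving_average(history, window, seasonality, horizon):
    """Forecast each step as the mean of the last `window` values one season apart."""
    values = list(history)
    if len(values) < window * seasonality:
        raise ValueError("history must cover at least window * seasonality points")
    forecast = []
    for _ in range(horizon):
        # Look back 1, 2, ..., window seasons from the point being predicted.
        lookback = [values[-i * seasonality] for i in range(1, window + 1)]
        prediction = sum(lookback) / window
        forecast.append(prediction)
        values.append(prediction)  # later steps may reuse earlier predictions
    return forecast


# A perfectly periodic toy series with period 3.
history = [1, 2, 3] * 4
forecast = seasonal_moving_average(history, window=2, seasonality=3, horizon=3)
print(forecast)  # [1.0, 2.0, 3.0]
```

With window=5 and seasonality=7, as in the cell above, each forecast would average the same weekday over the previous five weeks.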

[11]:

metrics_sma, forecast_sma, fold_info_sma = pipeline_sma.backtest(ts, metrics=metrics, n_folds=3, n_jobs=1)

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 2 out of 2 | elapsed: 0.1s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.1s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.1s finished

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 2 out of 2 | elapsed: 0.1s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.1s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.1s finished

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 2 out of 2 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.1s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.1s finished
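The backtest call uses n_folds=3 with a horizon of 7. The following sketch shows one common way such folds are laid out: an expanding training window with consecutive, non-overlapping test windows at the end of the series. That layout is an assumption made for illustration and is not confirmed by this notebook; `expanding_folds` is a hypothetical helper.

```python
def expanding_folds(n_points, horizon, n_folds):
    """Return (train_indices, test_indices) pairs, oldest fold first."""
    folds = []
    for k in range(n_folds):
        test_end = n_points - (n_folds - 1 - k) * horizon
        test_start = test_end - horizon
        # Training data is everything before the test window (expanding window).
        folds.append((range(0, test_start), range(test_start, test_end)))
    return folds


folds = expanding_folds(n_points=100, horizon=7, n_folds=3)
for number, (train, test) in enumerate(folds):
    print(f"fold {number}: train 0..{train[-1]}, test {test[0]}..{test[-1]}")
# fold 0: train 0..78, test 79..85
# fold 1: train 0..85, test 86..92
# fold 2: train 0..92, test 93..99
```

Each fold produces one metric row per segment, which is why the metrics table has one row for every (segment, fold_number) pair.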

[12]:

metrics_sma

[12]:

| | segment | SMAPE | MAPE | MAE | fold_number |

|---|---|---|---|---|---|

| 0 | segment_a | 6.343943 | 6.124296 | 33.196532 | 0 |

| 0 | segment_a | 5.346946 | 5.192455 | 27.938101 | 1 |

| 0 | segment_a | 7.510347 | 7.189999 | 40.028565 | 2 |

| 1 | segment_b | 7.178822 | 6.920176 | 17.818102 | 0 |

| 1 | segment_b | 5.672504 | 5.554555 | 13.719200 | 1 |

| 1 | segment_b | 3.327846 | 3.359712 | 7.680919 | 2 |

| 2 | segment_c | 6.430429 | 6.200580 | 10.877718 | 0 |

| 2 | segment_c | 5.947090 | 5.727531 | 10.701336 | 1 |

| 2 | segment_c | 6.186545 | 5.943679 | 11.359563 | 2 |

| 3 | segment_d | 4.707899 | 4.644170 | 39.918646 | 0 |

| 3 | segment_d | 5.403426 | 5.600978 | 43.047332 | 1 |

| 3 | segment_d | 2.505279 | 2.543719 | 19.347565 | 2 |

[13]:

score = metrics_sma["SMAPE"].mean()

print(f"Average SMAPE for Seasonal MA: {score:.3f}")

Average SMAPE for Seasonal MA: 5.547

[14]:

plot_backtest(forecast_sma, ts, history_len=20)

3.2 DeepAR#

[15]:

from etna.models.nn import DeepARModel

Before training let’s fix seeds for reproducibility.

[16]:

set_seed()

Default way#

[17]:

model_deepar = DeepARModel(

encoder_length=HORIZON,

decoder_length=HORIZON,

trainer_params=dict(max_epochs=20, gradient_clip_val=0.1),

lr=0.01,

train_batch_size=16,

)

metrics = [SMAPE(), MAPE(), MAE()]

pipeline_deepar = Pipeline(model=model_deepar, horizon=HORIZON)

[18]:

metrics_deepar, forecast_deepar, fold_info_deepar = pipeline_deepar.backtest(ts, metrics=metrics, n_folds=3, n_jobs=1)

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

GPU available: False, used: False

TPU available: False, using: 0 TPU cores

IPU available: False, using: 0 IPUs

HPU available: False, using: 0 HPUs

| Name | Type | Params

------------------------------------------------------------------

0 | loss | NormalDistributionLoss | 0

1 | logging_metrics | ModuleList | 0

2 | embeddings | MultiEmbedding | 0

3 | rnn | LSTM | 1.6 K

4 | distribution_projector | Linear | 22

------------------------------------------------------------------

1.6 K Trainable params

0 Non-trainable params

1.6 K Total params

0.006 Total estimated model params size (MB)

`Trainer.fit` stopped: `max_epochs=20` reached.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 18.2s remaining: 0.0s

GPU available: False, used: False

TPU available: False, using: 0 TPU cores

IPU available: False, using: 0 IPUs

HPU available: False, using: 0 HPUs

| Name | Type | Params

------------------------------------------------------------------

0 | loss | NormalDistributionLoss | 0

1 | logging_metrics | ModuleList | 0

2 | embeddings | MultiEmbedding | 0

3 | rnn | LSTM | 1.6 K

4 | distribution_projector | Linear | 22

------------------------------------------------------------------

1.6 K Trainable params

0 Non-trainable params

1.6 K Total params

0.006 Total estimated model params size (MB)

`Trainer.fit` stopped: `max_epochs=20` reached.

[Parallel(n_jobs=1)]: Done 2 out of 2 | elapsed: 35.7s remaining: 0.0s

GPU available: False, used: False

TPU available: False, using: 0 TPU cores

IPU available: False, using: 0 IPUs

HPU available: False, using: 0 HPUs

| Name | Type | Params

------------------------------------------------------------------

0 | loss | NormalDistributionLoss | 0

1 | logging_metrics | ModuleList | 0

2 | embeddings | MultiEmbedding | 0

3 | rnn | LSTM | 1.6 K

4 | distribution_projector | Linear | 22

------------------------------------------------------------------

1.6 K Trainable params

0 Non-trainable params

1.6 K Total params

0.006 Total estimated model params size (MB)

`Trainer.fit` stopped: `max_epochs=20` reached.

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 53.8s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 53.8s finished

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 0.1s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 2 out of 2 | elapsed: 0.1s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.2s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.2s finished

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 2 out of 2 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.1s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.1s finished

[19]:

metrics_deepar

[19]:

| | segment | SMAPE | MAPE | MAE | fold_number |

|---|---|---|---|---|---|

| 0 | segment_a | 11.991789 | 11.136389 | 60.220598 | 0 |

| 0 | segment_a | 3.738700 | 3.802559 | 19.429980 | 1 |

| 0 | segment_a | 9.372195 | 9.127999 | 47.781682 | 2 |

| 1 | segment_b | 8.085439 | 7.699522 | 20.147598 | 0 |

| 1 | segment_b | 4.951007 | 4.924278 | 11.986952 | 1 |

| 1 | segment_b | 5.498259 | 5.821214 | 12.443898 | 2 |

| 2 | segment_c | 5.561446 | 5.633262 | 9.442320 | 0 |

| 2 | segment_c | 7.060725 | 6.841531 | 12.387765 | 1 |

| 2 | segment_c | 5.387418 | 5.496499 | 9.709654 | 2 |

| 3 | segment_d | 6.425422 | 6.287132 | 55.566659 | 0 |

| 3 | segment_d | 3.536992 | 3.587428 | 27.874826 | 1 |

| 3 | segment_d | 6.445370 | 6.539983 | 50.958487 | 2 |

To summarize, we take the mean SMAPE, because it is scale-independent and can therefore be averaged across segments of different magnitude.

[20]:

score = metrics_deepar["SMAPE"].mean()

print(f"Average SMAPE for DeepAR: {score:.3f}")

Average SMAPE for DeepAR: 6.505
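The reason for averaging SMAPE rather than MAE can be checked numerically. The sketch below assumes the common percent form of SMAPE, 200/n · Σ|y − ŷ| / (|y| + |ŷ|); whether the library uses exactly this convention is an assumption. The `smape` and `mae` functions are hypothetical helpers and the numbers are made up for illustration.

```python
def smape(y_true, y_pred):
    """Symmetric MAPE in percent: 200/n * sum(|y - yhat| / (|y| + |yhat|))."""
    terms = [abs(t - p) / (abs(t) + abs(p)) for t, p in zip(y_true, y_pred)]
    return 200.0 * sum(terms) / len(terms)


def mae(y_true, y_pred):
    """Mean absolute error in the units of the target."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


y_true = [100.0, 200.0, 300.0]
y_pred = [110.0, 190.0, 330.0]

# The same series and forecast, expressed in units ten times larger.
scaled_true = [10 * v for v in y_true]
scaled_pred = [10 * v for v in y_pred]

print(round(smape(y_true, y_pred), 3), round(smape(scaled_true, scaled_pred), 3))
print(round(mae(y_true, y_pred), 3), round(mae(scaled_true, scaled_pred), 3))
```

SMAPE is unchanged by the rescaling while MAE grows tenfold, so a mean of MAE values would be dominated by the largest segment.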

Dataset Builder: creating a dataset for DeepAR with extra features#

[21]:

from pytorch_forecasting.data import GroupNormalizer

num_lags = 10

transform_date = DateFlagsTransform(

day_number_in_week=True,

day_number_in_month=False,

day_number_in_year=False,

week_number_in_month=False,

week_number_in_year=False,

month_number_in_year=False,

season_number=False,

year_number=False,

is_weekend=False,

out_column="dateflag",

)

transform_lag = LagTransform(

in_column="target",

lags=[HORIZON + i for i in range(num_lags)],

out_column="target_lag",

)

lag_columns = [f"target_lag_{HORIZON+i}" for i in range(num_lags)]

dataset_builder_deepar = PytorchForecastingDatasetBuilder(

max_encoder_length=HORIZON,

max_prediction_length=HORIZON,

time_varying_known_reals=["time_idx"] + lag_columns,

time_varying_unknown_reals=["target"],

time_varying_known_categoricals=["dateflag_day_number_in_week"],

target_normalizer=GroupNormalizer(groups=["segment"]),

)
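The lags above start at HORIZON rather than at 1. A likely reason is that a lag feature can only be treated as a known regressor if its value is available for every step of the forecast horizon. The sketch below illustrates this with a hypothetical `lag_feature` helper on a made-up series; it does not use LagTransform itself.

```python
def lag_feature(series, lag):
    """Value observed `lag` steps earlier; None where no history exists."""
    return [series[i - lag] if i - lag >= 0 else None for i in range(len(series))]


HORIZON = 7
history = list(range(100, 110))        # 10 observed points
extended = history + [None] * HORIZON  # future targets are unknown

lag_7 = lag_feature(extended, HORIZON)
lag_1 = lag_feature(extended, 1)

# Keep only the part of each feature that falls inside the forecast horizon.
future_lag_7 = lag_7[len(history):]
future_lag_1 = lag_1[len(history):]

print(future_lag_7)  # [103, 104, 105, 106, 107, 108, 109]
print(future_lag_1)  # [109, None, None, None, None, None, None]
```

A lag of 1 is known only for the first future step, while a lag of HORIZON is fully known across the horizon.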

Now let’s run the backtest.

[22]:

set_seed()

model_deepar = DeepARModel(

dataset_builder=dataset_builder_deepar,

trainer_params=dict(max_epochs=20, gradient_clip_val=0.1),

lr=0.01,

train_batch_size=16,

)

pipeline_deepar = Pipeline(

model=model_deepar,

horizon=HORIZON,

transforms=[transform_lag, transform_date],

)

[23]:

metrics_deepar, forecast_deepar, fold_info_deepar = pipeline_deepar.backtest(ts, metrics=metrics, n_folds=3, n_jobs=1)

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

GPU available: False, used: False

TPU available: False, using: 0 TPU cores

IPU available: False, using: 0 IPUs

HPU available: False, using: 0 HPUs

| Name | Type | Params

------------------------------------------------------------------

0 | loss | NormalDistributionLoss | 0

1 | logging_metrics | ModuleList | 0

2 | embeddings | MultiEmbedding | 35

3 | rnn | LSTM | 2.2 K

4 | distribution_projector | Linear | 22

------------------------------------------------------------------

2.3 K Trainable params

0 Non-trainable params

2.3 K Total params

0.009 Total estimated model params size (MB)

`Trainer.fit` stopped: `max_epochs=20` reached.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 17.5s remaining: 0.0s

GPU available: False, used: False

TPU available: False, using: 0 TPU cores

IPU available: False, using: 0 IPUs

HPU available: False, using: 0 HPUs

| Name | Type | Params

------------------------------------------------------------------

0 | loss | NormalDistributionLoss | 0

1 | logging_metrics | ModuleList | 0

2 | embeddings | MultiEmbedding | 35

3 | rnn | LSTM | 2.2 K

4 | distribution_projector | Linear | 22

------------------------------------------------------------------

2.3 K Trainable params

0 Non-trainable params

2.3 K Total params

0.009 Total estimated model params size (MB)

`Trainer.fit` stopped: `max_epochs=20` reached.

[Parallel(n_jobs=1)]: Done 2 out of 2 | elapsed: 35.1s remaining: 0.0s

GPU available: False, used: False

TPU available: False, using: 0 TPU cores

IPU available: False, using: 0 IPUs

HPU available: False, using: 0 HPUs

| Name | Type | Params

------------------------------------------------------------------

0 | loss | NormalDistributionLoss | 0

1 | logging_metrics | ModuleList | 0

2 | embeddings | MultiEmbedding | 35

3 | rnn | LSTM | 2.2 K

4 | distribution_projector | Linear | 22

------------------------------------------------------------------

2.3 K Trainable params

0 Non-trainable params

2.3 K Total params

0.009 Total estimated model params size (MB)

`Trainer.fit` stopped: `max_epochs=20` reached.

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 53.1s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 53.1s finished

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 0.1s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 2 out of 2 | elapsed: 0.2s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.2s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.2s finished

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 2 out of 2 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.1s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.1s finished

Let’s compare results across different segments.

[24]:

metrics_deepar

[24]:

| | segment | SMAPE | MAPE | MAE | fold_number |

|---|---|---|---|---|---|

| 0 | segment_a | 6.805594 | 6.531085 | 35.181100 | 0 |

| 0 | segment_a | 3.220954 | 3.193369 | 16.990387 | 1 |

| 0 | segment_a | 6.250622 | 5.970213 | 33.195587 | 2 |

| 1 | segment_b | 7.510020 | 7.240360 | 18.528828 | 0 |

| 1 | segment_b | 3.394223 | 3.402076 | 8.272498 | 1 |

| 1 | segment_b | 2.550600 | 2.556611 | 6.122029 | 2 |

| 2 | segment_c | 2.711825 | 2.738573 | 4.532955 | 0 |

| 2 | segment_c | 4.593296 | 4.459803 | 8.320400 | 1 |

| 2 | segment_c | 4.944589 | 4.842140 | 9.074217 | 2 |

| 3 | segment_d | 5.825108 | 5.610574 | 51.673619 | 0 |

| 3 | segment_d | 4.951743 | 5.077607 | 39.208165 | 1 |

| 3 | segment_d | 5.460451 | 5.284515 | 46.397409 | 2 |

To summarize, we again take the mean SMAPE, because it is scale-independent.

[25]:

score = metrics_deepar["SMAPE"].mean()

print(f"Average SMAPE for DeepAR: {score:.3f}")

Average SMAPE for DeepAR: 4.852

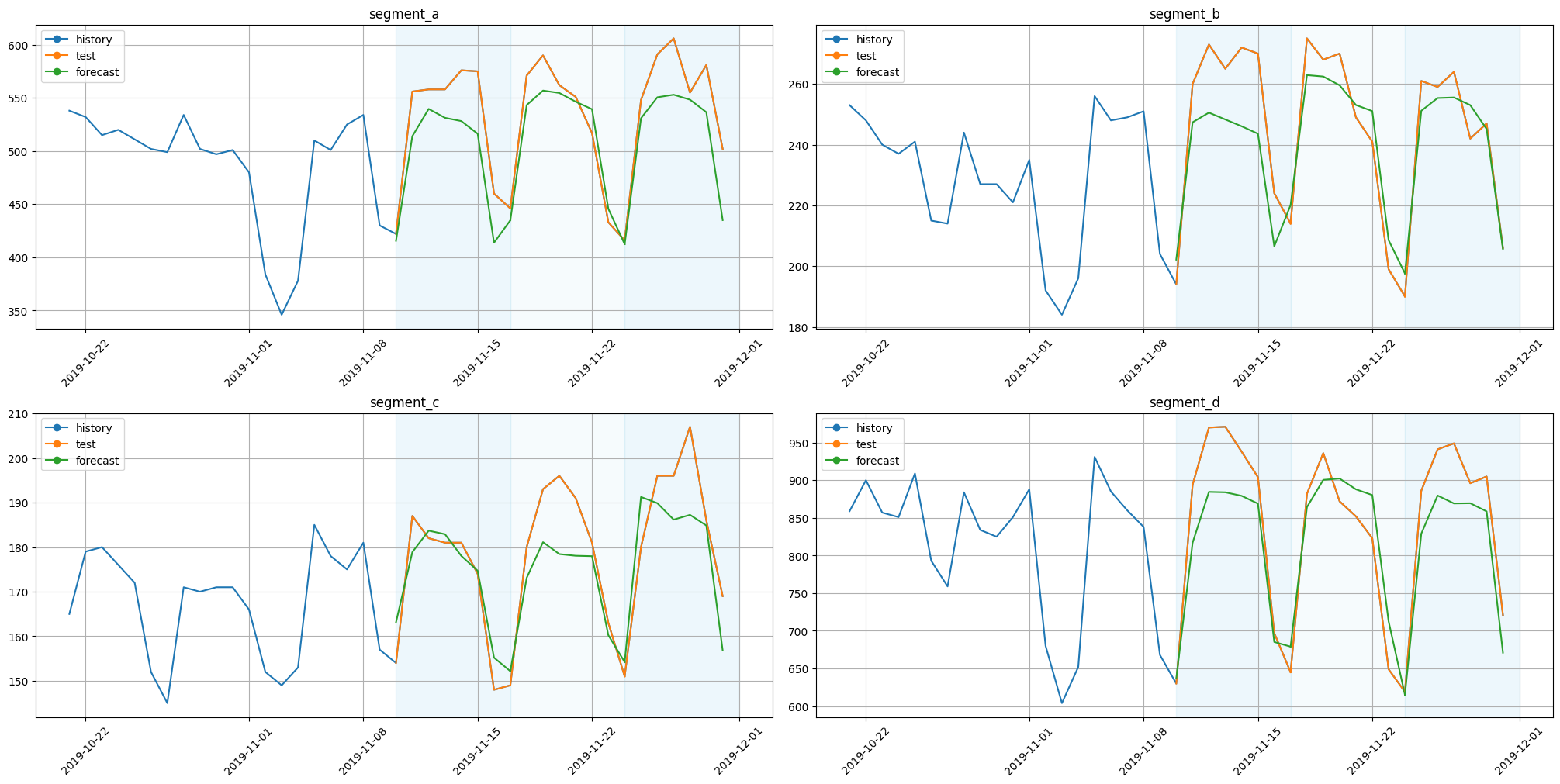

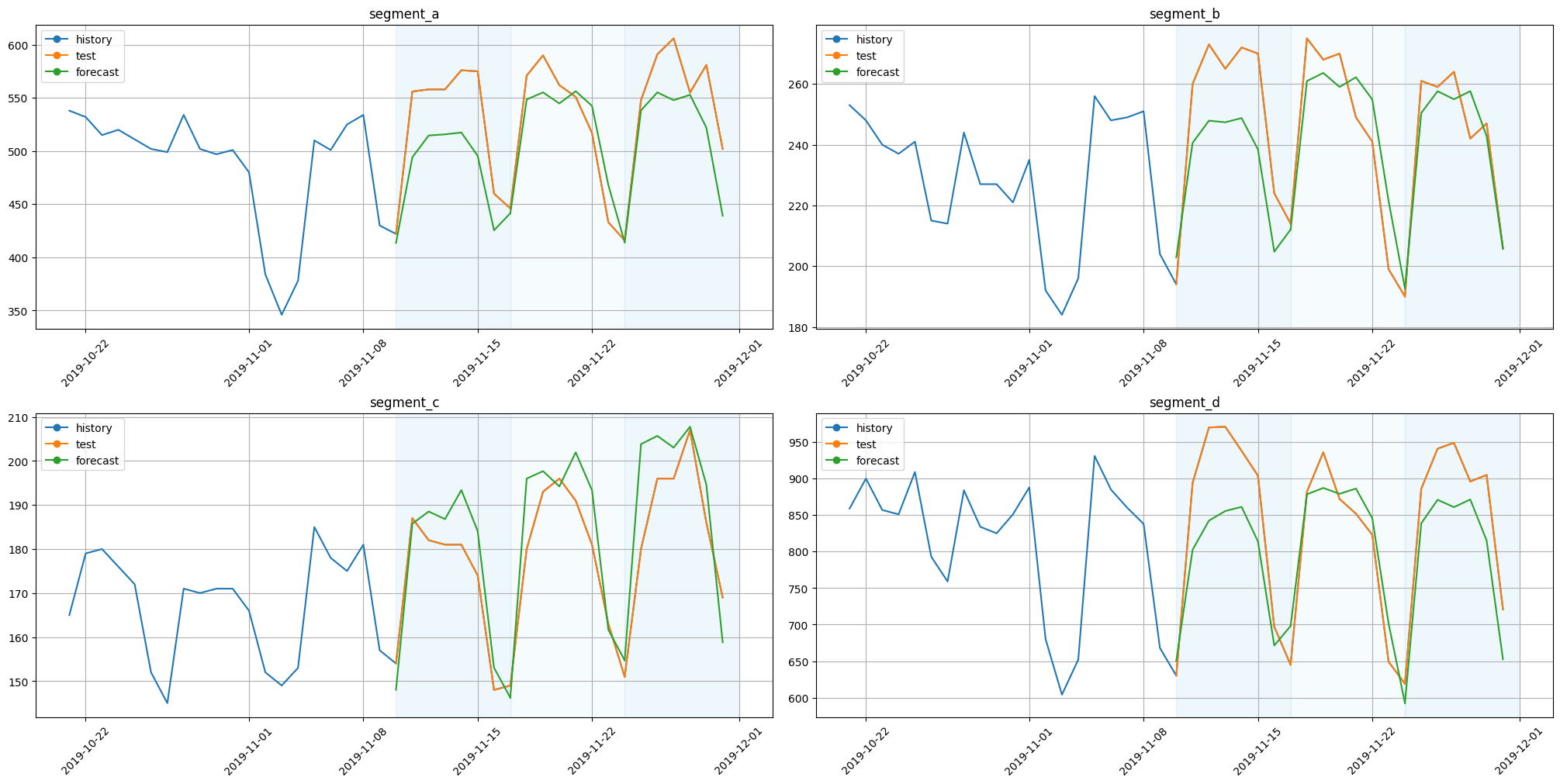

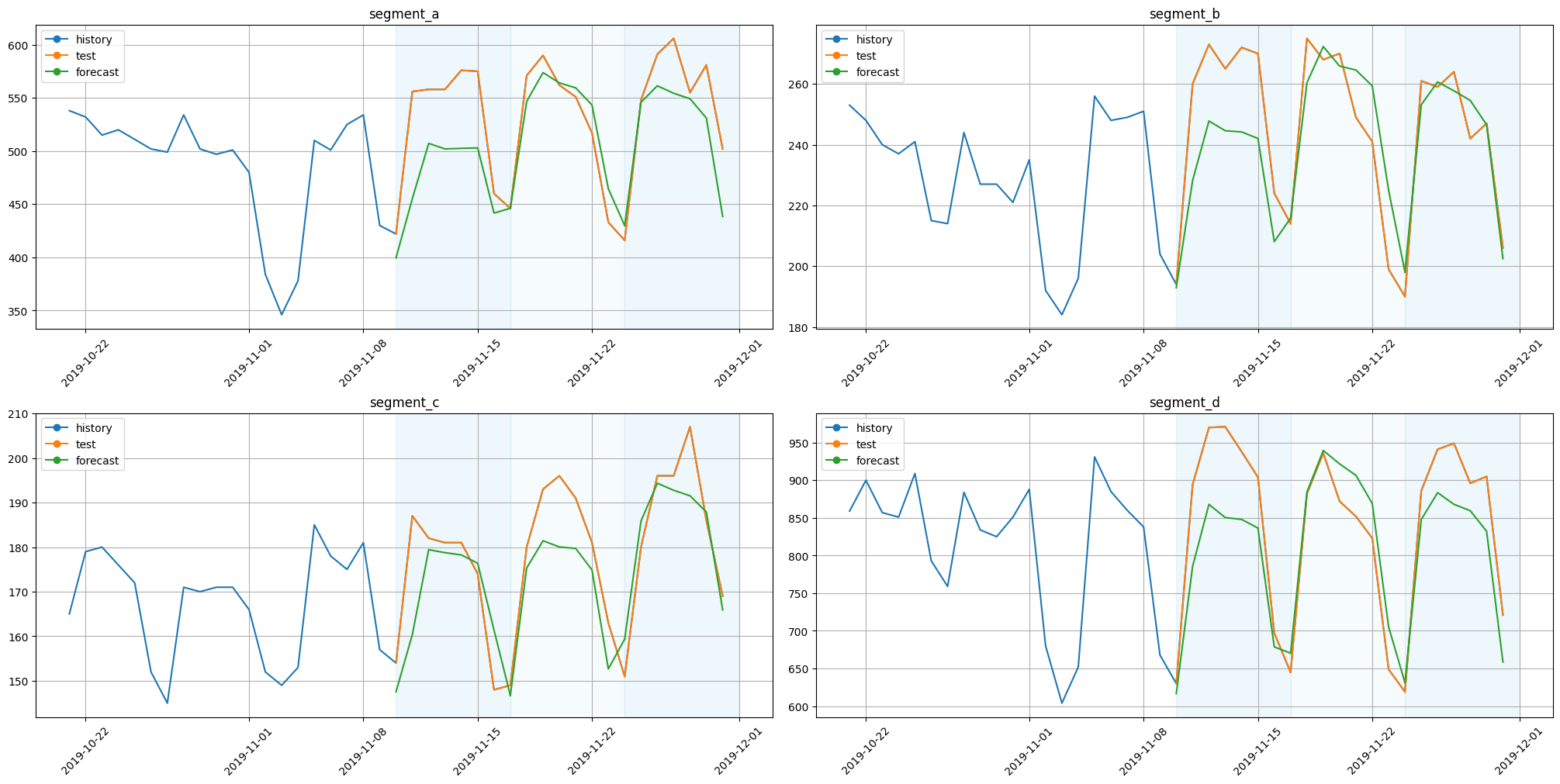

Visualize results.

[26]:

plot_backtest(forecast_deepar, ts, history_len=20)

3.3 DeepARNative#

We recommend using our native implementation of DeepAR; the Pytorch Forecasting version will be removed in etna 3.0.0.

[27]:

from etna.models.nn import DeepARNativeModel

[28]:

num_lags = 7

scaler = StandardScalerTransform(in_column="target")

transform_date = DateFlagsTransform(

day_number_in_week=True,

day_number_in_month=False,

day_number_in_year=False,

week_number_in_month=False,

week_number_in_year=False,

month_number_in_year=False,

season_number=False,

year_number=False,

is_weekend=False,

out_column="dateflag",

)

transform_lag = LagTransform(

in_column="target",

lags=[HORIZON + i for i in range(num_lags)],

out_column="target_lag",

)

label_encoder = LabelEncoderTransform(

in_column="dateflag_day_number_in_week", strategy="none", out_column="dateflag_day_number_in_week_label"

)

embedding_sizes = {"dateflag_day_number_in_week_label": (7, 7)}
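The cell above encodes the day of the week as integer labels and declares an embedding of size (7, 7) for it. The sketch below shows the general idea in plain Python, assuming the pair means (number of categories, embedding dimension). `fit_label_encoder` and the random table are hypothetical stand-ins; in the real model the embedding table is a trained parameter.

```python
import random


def fit_label_encoder(values):
    """Assign consecutive integer codes to the sorted distinct values."""
    return {value: code for code, value in enumerate(sorted(set(values)))}


day_of_week = [1, 2, 3, 4, 5, 6, 0, 1, 2]  # example day_number_in_week values
encoder = fit_label_encoder(day_of_week)
codes = [encoder[v] for v in day_of_week]

# Assumed meaning of the (7, 7) pair: 7 categories, each mapped to a 7-dimensional vector.
n_categories, embedding_dim = 7, 7
rng = random.Random(0)
embedding_table = [
    [rng.uniform(-1, 1) for _ in range(embedding_dim)] for _ in range(n_categories)
]

# An embedding lookup is just row selection by integer code.
vectors = [embedding_table[code] for code in codes]
print(codes)                          # [1, 2, 3, 4, 5, 6, 0, 1, 2]
print(len(vectors), len(vectors[0]))  # 9 7
```

The training log for this model reports 56 embedding parameters, which equals (7 + 1) × 7. One possible reading is that an extra row is reserved for unseen categories, but that is an inference from the log rather than something this notebook states.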

[29]:

set_seed()

model_deepar_native = DeepARNativeModel(

input_size=num_lags + 1,

encoder_length=2 * HORIZON,

decoder_length=HORIZON,

embedding_sizes=embedding_sizes,

lr=0.01,

scale=False,

n_samples=100,

trainer_params=dict(max_epochs=2),

)

pipeline_deepar_native = Pipeline(

model=model_deepar_native,

horizon=HORIZON,

transforms=[scaler, transform_lag, transform_date, label_encoder],

)

[30]:

metrics_deepar_native, forecast_deepar_native, fold_info_deepar_native = pipeline_deepar_native.backtest(

ts, metrics=metrics, n_folds=3, n_jobs=1

)

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

GPU available: False, used: False

TPU available: False, using: 0 TPU cores

IPU available: False, using: 0 IPUs

HPU available: False, using: 0 HPUs

| Name | Type | Params

----------------------------------------------

0 | loss | GaussianLoss | 0

1 | embedding | MultiEmbedding | 56

2 | rnn | LSTM | 4.3 K

3 | projection | ModuleDict | 34

----------------------------------------------

4.4 K Trainable params

0 Non-trainable params

4.4 K Total params

0.018 Total estimated model params size (MB)

`Trainer.fit` stopped: `max_epochs=2` reached.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 1.1s remaining: 0.0s

GPU available: False, used: False

TPU available: False, using: 0 TPU cores

IPU available: False, using: 0 IPUs

HPU available: False, using: 0 HPUs

| Name | Type | Params

----------------------------------------------

0 | loss | GaussianLoss | 0

1 | embedding | MultiEmbedding | 56

2 | rnn | LSTM | 4.3 K

3 | projection | ModuleDict | 34

----------------------------------------------

4.4 K Trainable params

0 Non-trainable params

4.4 K Total params

0.018 Total estimated model params size (MB)

`Trainer.fit` stopped: `max_epochs=2` reached.

[Parallel(n_jobs=1)]: Done 2 out of 2 | elapsed: 2.2s remaining: 0.0s

GPU available: False, used: False

TPU available: False, using: 0 TPU cores

IPU available: False, using: 0 IPUs

HPU available: False, using: 0 HPUs

| Name | Type | Params

----------------------------------------------

0 | loss | GaussianLoss | 0

1 | embedding | MultiEmbedding | 56

2 | rnn | LSTM | 4.3 K

3 | projection | ModuleDict | 34

----------------------------------------------

4.4 K Trainable params

0 Non-trainable params

4.4 K Total params

0.018 Total estimated model params size (MB)

`Trainer.fit` stopped: `max_epochs=2` reached.

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 3.3s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 3.3s finished

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 0.2s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 2 out of 2 | elapsed: 0.4s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.6s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.6s finished

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 2 out of 2 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.1s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.1s finished

[31]:

score = metrics_deepar_native["SMAPE"].mean()

print(f"Average SMAPE for DeepARNative: {score:.3f}")

Average SMAPE for DeepARNative: 5.816

[32]:

plot_backtest(forecast_deepar_native, ts, history_len=20)

3.4 TFT#

Let’s move to the next model.

[33]:

from etna.models.nn import TFTModel

[34]:

set_seed()

Default way#

[35]:

model_tft = TFTModel(

encoder_length=2 * HORIZON,

decoder_length=HORIZON,

trainer_params=dict(max_epochs=60, gradient_clip_val=0.1),

lr=0.01,

train_batch_size=32,

)

pipeline_tft = Pipeline(

model=model_tft,

horizon=HORIZON,

)

[36]:

metrics_tft, forecast_tft, fold_info_tft = pipeline_tft.backtest(ts, metrics=metrics, n_folds=3, n_jobs=1)

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

GPU available: False, used: False

TPU available: False, using: 0 TPU cores

IPU available: False, using: 0 IPUs

HPU available: False, using: 0 HPUs

| Name | Type | Params

----------------------------------------------------------------------------------------

0 | loss | QuantileLoss | 0

1 | logging_metrics | ModuleList | 0

2 | input_embeddings | MultiEmbedding | 0

3 | prescalers | ModuleDict | 96

4 | static_variable_selection | VariableSelectionNetwork | 1.7 K

5 | encoder_variable_selection | VariableSelectionNetwork | 1.8 K

6 | decoder_variable_selection | VariableSelectionNetwork | 1.2 K

7 | static_context_variable_selection | GatedResidualNetwork | 1.1 K

8 | static_context_initial_hidden_lstm | GatedResidualNetwork | 1.1 K

9 | static_context_initial_cell_lstm | GatedResidualNetwork | 1.1 K

10 | static_context_enrichment | GatedResidualNetwork | 1.1 K

11 | lstm_encoder | LSTM | 2.2 K

12 | lstm_decoder | LSTM | 2.2 K

13 | post_lstm_gate_encoder | GatedLinearUnit | 544

14 | post_lstm_add_norm_encoder | AddNorm | 32

15 | static_enrichment | GatedResidualNetwork | 1.4 K

16 | multihead_attn | InterpretableMultiHeadAttention | 676

17 | post_attn_gate_norm | GateAddNorm | 576

18 | pos_wise_ff | GatedResidualNetwork | 1.1 K

19 | pre_output_gate_norm | GateAddNorm | 576

20 | output_layer | Linear | 119

----------------------------------------------------------------------------------------

18.4 K Trainable params

0 Non-trainable params

18.4 K Total params

0.074 Total estimated model params size (MB)

`Trainer.fit` stopped: `max_epochs=60` reached.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 1.2min remaining: 0.0s

GPU available: False, used: False

TPU available: False, using: 0 TPU cores

IPU available: False, using: 0 IPUs

HPU available: False, using: 0 HPUs

| Name | Type | Params

----------------------------------------------------------------------------------------

0 | loss | QuantileLoss | 0

1 | logging_metrics | ModuleList | 0

2 | input_embeddings | MultiEmbedding | 0

3 | prescalers | ModuleDict | 96

4 | static_variable_selection | VariableSelectionNetwork | 1.7 K

5 | encoder_variable_selection | VariableSelectionNetwork | 1.8 K

6 | decoder_variable_selection | VariableSelectionNetwork | 1.2 K

7 | static_context_variable_selection | GatedResidualNetwork | 1.1 K

8 | static_context_initial_hidden_lstm | GatedResidualNetwork | 1.1 K

9 | static_context_initial_cell_lstm | GatedResidualNetwork | 1.1 K

10 | static_context_enrichment | GatedResidualNetwork | 1.1 K

11 | lstm_encoder | LSTM | 2.2 K

12 | lstm_decoder | LSTM | 2.2 K

13 | post_lstm_gate_encoder | GatedLinearUnit | 544

14 | post_lstm_add_norm_encoder | AddNorm | 32

15 | static_enrichment | GatedResidualNetwork | 1.4 K

16 | multihead_attn | InterpretableMultiHeadAttention | 676

17 | post_attn_gate_norm | GateAddNorm | 576

18 | pos_wise_ff | GatedResidualNetwork | 1.1 K

19 | pre_output_gate_norm | GateAddNorm | 576

20 | output_layer | Linear | 119

----------------------------------------------------------------------------------------

18.4 K Trainable params

0 Non-trainable params

18.4 K Total params

0.074 Total estimated model params size (MB)

`Trainer.fit` stopped: `max_epochs=60` reached.

[Parallel(n_jobs=1)]: Done 2 out of 2 | elapsed: 2.4min remaining: 0.0s

GPU available: False, used: False

TPU available: False, using: 0 TPU cores

IPU available: False, using: 0 IPUs

HPU available: False, using: 0 HPUs

| Name | Type | Params

----------------------------------------------------------------------------------------

0 | loss | QuantileLoss | 0

1 | logging_metrics | ModuleList | 0

2 | input_embeddings | MultiEmbedding | 0

3 | prescalers | ModuleDict | 96

4 | static_variable_selection | VariableSelectionNetwork | 1.7 K

5 | encoder_variable_selection | VariableSelectionNetwork | 1.8 K

6 | decoder_variable_selection | VariableSelectionNetwork | 1.2 K

7 | static_context_variable_selection | GatedResidualNetwork | 1.1 K

8 | static_context_initial_hidden_lstm | GatedResidualNetwork | 1.1 K

9 | static_context_initial_cell_lstm | GatedResidualNetwork | 1.1 K

10 | static_context_enrichment | GatedResidualNetwork | 1.1 K

11 | lstm_encoder | LSTM | 2.2 K

12 | lstm_decoder | LSTM | 2.2 K

13 | post_lstm_gate_encoder | GatedLinearUnit | 544

14 | post_lstm_add_norm_encoder | AddNorm | 32

15 | static_enrichment | GatedResidualNetwork | 1.4 K

16 | multihead_attn | InterpretableMultiHeadAttention | 676

17 | post_attn_gate_norm | GateAddNorm | 576

18 | pos_wise_ff | GatedResidualNetwork | 1.1 K

19 | pre_output_gate_norm | GateAddNorm | 576

20 | output_layer | Linear | 119

----------------------------------------------------------------------------------------

18.4 K Trainable params

0 Non-trainable params

18.4 K Total params

0.074 Total estimated model params size (MB)

`Trainer.fit` stopped: `max_epochs=60` reached.

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 3.6min remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 3.6min finished

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 2 out of 2 | elapsed: 0.1s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.1s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.1s finished

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 2 out of 2 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.1s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.1s finished
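The model summary in the log above lists QuantileLoss as the training loss. As background, the sketch below shows the standard quantile (pinball) loss for a single prediction. `pinball_loss` is a hypothetical helper; the library's loss may scale the value or aggregate over several quantiles differently.

```python
def pinball_loss(y_true, y_pred, quantile):
    """Standard pinball loss for one observation and one quantile level."""
    diff = y_true - y_pred
    return max(quantile * diff, (quantile - 1) * diff)


# For a high quantile, forecasting too low costs more than forecasting too high.
under = pinball_loss(100.0, 90.0, quantile=0.9)   # forecast below the actual value
over = pinball_loss(100.0, 110.0, quantile=0.9)   # forecast above the actual value
median = pinball_loss(100.0, 90.0, quantile=0.5)  # symmetric case

print(round(under, 3), round(over, 3), round(median, 3))
```

For quantile 0.5 the loss is half the absolute error, so the median forecast is trained much like an MAE-optimal point forecast.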

[37]:

metrics_tft

[37]:

| | segment | SMAPE | MAPE | MAE | fold_number |

|---|---|---|---|---|---|

| 0 | segment_a | 47.938348 | 38.337110 | 207.205824 | 0 |

| 0 | segment_a | 13.373072 | 12.681063 | 68.228463 | 1 |

| 0 | segment_a | 31.370020 | 26.633958 | 150.253693 | 2 |

| 1 | segment_b | 25.325660 | 30.129167 | 70.937051 | 0 |

| 1 | segment_b | 10.611735 | 10.544364 | 25.538180 | 1 |

| 1 | segment_b | 21.439563 | 18.929667 | 47.594628 | 2 |

| 2 | segment_c | 60.741901 | 88.137427 | 149.651367 | 0 |

| 2 | segment_c | 10.590052 | 10.181659 | 18.510978 | 1 |

| 2 | segment_c | 18.217563 | 16.497026 | 31.354285 | 2 |

| 3 | segment_d | 89.380608 | 61.471369 | 535.634404 | 0 |

| 3 | segment_d | 36.360018 | 30.180033 | 254.863220 | 1 |

| 3 | segment_d | 71.920184 | 52.521257 | 452.829930 | 2 |

[38]:

score = metrics_tft["SMAPE"].mean()

print(f"Average SMAPE for TFT: {score:.3f}")

Average SMAPE for TFT: 36.439

Dataset Builder#

[39]:

set_seed()

num_lags = 10

lag_columns = [f"target_lag_{HORIZON+i}" for i in range(num_lags)]

transform_date = DateFlagsTransform(day_number_in_week=True, day_number_in_month=False, out_column="dateflag")

transform_lag = LagTransform(

in_column="target",

lags=[HORIZON + i for i in range(num_lags)],

out_column="target_lag",

)

dataset_builder_tft = PytorchForecastingDatasetBuilder(

max_encoder_length=HORIZON,

max_prediction_length=HORIZON,

time_varying_known_reals=["time_idx"],

time_varying_unknown_reals=["target"],

time_varying_known_categoricals=["dateflag_day_number_in_week"],

static_categoricals=["segment"],

target_normalizer=GroupNormalizer(groups=["segment"]),

)
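The target_normalizer argument above normalizes the target separately for each segment. Here is a minimal sketch of that idea, assuming plain mean and standard deviation scaling per group; the options and defaults of GroupNormalizer may differ. The helper functions are hypothetical, and the values are the first five targets of two segments from the dataset preview.

```python
from statistics import mean, pstdev

targets_by_segment = {
    "segment_a": [170, 243, 267, 287, 279],
    "segment_d": [238, 358, 366, 385, 384],
}


def fit_group_stats(values_by_group):
    """Compute a (mean, standard deviation) pair for every group."""
    return {
        group: (mean(values), pstdev(values))
        for group, values in values_by_group.items()
    }


def normalize(values, group, stats):
    """Center and scale values with the statistics of their own group."""
    center, scale = stats[group]
    return [(value - center) / scale for value in values]


stats = fit_group_stats(targets_by_segment)
normalized = {
    group: normalize(values, group, stats)
    for group, values in targets_by_segment.items()
}
for group, values in normalized.items():
    print(group, [round(v, 2) for v in values])
```

After this step both segments are on a comparable scale, which lets a single network learn from all of them at once.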

[40]:

model_tft = TFTModel(

dataset_builder=dataset_builder_tft,

trainer_params=dict(max_epochs=50, gradient_clip_val=0.1),

lr=0.01,

train_batch_size=32,

)

pipeline_tft = Pipeline(

model=model_tft,

horizon=HORIZON,

transforms=[transform_lag, transform_date],

)

[41]:

metrics_tft, forecast_tft, fold_info_tft = pipeline_tft.backtest(ts, metrics=metrics, n_folds=3, n_jobs=1)

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

GPU available: False, used: False

TPU available: False, using: 0 TPU cores

IPU available: False, using: 0 IPUs

HPU available: False, using: 0 HPUs

| Name | Type | Params

----------------------------------------------------------------------------------------

0 | loss | QuantileLoss | 0

1 | logging_metrics | ModuleList | 0

2 | input_embeddings | MultiEmbedding | 47

3 | prescalers | ModuleDict | 96

4 | static_variable_selection | VariableSelectionNetwork | 1.8 K

5 | encoder_variable_selection | VariableSelectionNetwork | 1.9 K

6 | decoder_variable_selection | VariableSelectionNetwork | 1.3 K

7 | static_context_variable_selection | GatedResidualNetwork | 1.1 K

8 | static_context_initial_hidden_lstm | GatedResidualNetwork | 1.1 K

9 | static_context_initial_cell_lstm | GatedResidualNetwork | 1.1 K

10 | static_context_enrichment | GatedResidualNetwork | 1.1 K

11 | lstm_encoder | LSTM | 2.2 K

12 | lstm_decoder | LSTM | 2.2 K

13 | post_lstm_gate_encoder | GatedLinearUnit | 544

14 | post_lstm_add_norm_encoder | AddNorm | 32

15 | static_enrichment | GatedResidualNetwork | 1.4 K

16 | multihead_attn | InterpretableMultiHeadAttention | 676

17 | post_attn_gate_norm | GateAddNorm | 576

18 | pos_wise_ff | GatedResidualNetwork | 1.1 K

19 | pre_output_gate_norm | GateAddNorm | 576

20 | output_layer | Linear | 119

----------------------------------------------------------------------------------------

18.9 K Trainable params

0 Non-trainable params

18.9 K Total params

0.075 Total estimated model params size (MB)

`Trainer.fit` stopped: `max_epochs=50` reached.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 59.3s remaining: 0.0s

GPU available: False, used: False

TPU available: False, using: 0 TPU cores

IPU available: False, using: 0 IPUs

HPU available: False, using: 0 HPUs

| Name | Type | Params

----------------------------------------------------------------------------------------

0 | loss | QuantileLoss | 0

1 | logging_metrics | ModuleList | 0

2 | input_embeddings | MultiEmbedding | 47

3 | prescalers | ModuleDict | 96

4 | static_variable_selection | VariableSelectionNetwork | 1.8 K

5 | encoder_variable_selection | VariableSelectionNetwork | 1.9 K

6 | decoder_variable_selection | VariableSelectionNetwork | 1.3 K

7 | static_context_variable_selection | GatedResidualNetwork | 1.1 K

8 | static_context_initial_hidden_lstm | GatedResidualNetwork | 1.1 K

9 | static_context_initial_cell_lstm | GatedResidualNetwork | 1.1 K

10 | static_context_enrichment | GatedResidualNetwork | 1.1 K

11 | lstm_encoder | LSTM | 2.2 K

12 | lstm_decoder | LSTM | 2.2 K

13 | post_lstm_gate_encoder | GatedLinearUnit | 544

14 | post_lstm_add_norm_encoder | AddNorm | 32

15 | static_enrichment | GatedResidualNetwork | 1.4 K

16 | multihead_attn | InterpretableMultiHeadAttention | 676

17 | post_attn_gate_norm | GateAddNorm | 576

18 | pos_wise_ff | GatedResidualNetwork | 1.1 K

19 | pre_output_gate_norm | GateAddNorm | 576

20 | output_layer | Linear | 119

----------------------------------------------------------------------------------------

18.9 K Trainable params

0 Non-trainable params

18.9 K Total params

0.075 Total estimated model params size (MB)

`Trainer.fit` stopped: `max_epochs=50` reached.

[Parallel(n_jobs=1)]: Done 2 out of 2 | elapsed: 2.0min remaining: 0.0s

GPU available: False, used: False

TPU available: False, using: 0 TPU cores

IPU available: False, using: 0 IPUs

HPU available: False, using: 0 HPUs

| Name | Type | Params

----------------------------------------------------------------------------------------

0 | loss | QuantileLoss | 0

1 | logging_metrics | ModuleList | 0

2 | input_embeddings | MultiEmbedding | 47

3 | prescalers | ModuleDict | 96

4 | static_variable_selection | VariableSelectionNetwork | 1.8 K

5 | encoder_variable_selection | VariableSelectionNetwork | 1.9 K

6 | decoder_variable_selection | VariableSelectionNetwork | 1.3 K

7 | static_context_variable_selection | GatedResidualNetwork | 1.1 K

8 | static_context_initial_hidden_lstm | GatedResidualNetwork | 1.1 K

9 | static_context_initial_cell_lstm | GatedResidualNetwork | 1.1 K

10 | static_context_enrichment | GatedResidualNetwork | 1.1 K

11 | lstm_encoder | LSTM | 2.2 K

12 | lstm_decoder | LSTM | 2.2 K

13 | post_lstm_gate_encoder | GatedLinearUnit | 544

14 | post_lstm_add_norm_encoder | AddNorm | 32

15 | static_enrichment | GatedResidualNetwork | 1.4 K

16 | multihead_attn | InterpretableMultiHeadAttention | 676

17 | post_attn_gate_norm | GateAddNorm | 576

18 | pos_wise_ff | GatedResidualNetwork | 1.1 K

19 | pre_output_gate_norm | GateAddNorm | 576

20 | output_layer | Linear | 119

----------------------------------------------------------------------------------------

18.9 K Trainable params

0 Non-trainable params

18.9 K Total params

0.075 Total estimated model params size (MB)

`Trainer.fit` stopped: `max_epochs=50` reached.

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 3.0min remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 3.0min finished

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 0.1s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 2 out of 2 | elapsed: 0.1s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.2s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.2s finished

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 2 out of 2 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.1s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.1s finished

[42]:

metrics_tft

[42]:

| | segment | SMAPE | MAPE | MAE | fold_number |

|---|---|---|---|---|---|

| 0 | segment_a | 6.219016 | 5.998432 | 31.660413 | 0 |

| 0 | segment_a | 6.268309 | 6.003822 | 32.816515 | 1 |

| 0 | segment_a | 9.697435 | 9.145774 | 51.779083 | 2 |

| 1 | segment_b | 7.691970 | 7.346405 | 19.040307 | 0 |

| 1 | segment_b | 5.268597 | 5.134976 | 12.925631 | 1 |

| 1 | segment_b | 4.920808 | 4.749094 | 11.951202 | 2 |

| 2 | segment_c | 4.135875 | 4.029833 | 7.123058 | 0 |

| 2 | segment_c | 2.613439 | 2.556872 | 4.862113 | 1 |

| 2 | segment_c | 5.812696 | 5.542748 | 10.524150 | 2 |

| 3 | segment_d | 6.789527 | 6.496418 | 60.241071 | 0 |

| 3 | segment_d | 3.483833 | 3.488098 | 28.402134 | 1 |

| 3 | segment_d | 4.614983 | 4.484013 | 38.315325 | 2 |

[43]:

score = metrics_tft["SMAPE"].mean()

print(f"Average SMAPE for TFT: {score:.3f}")

Average SMAPE for TFT: 5.626
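The overall average hides differences between segments and folds. As a minimal sketch, the SMAPE values from the `metrics_tft` table above (copied by hand here rather than read from the dataframe) can be averaged per segment:

```python
from statistics import mean

# SMAPE values copied from the metrics_tft table above: segment -> one value per fold
smape_by_segment = {
    "segment_a": [6.219016, 6.268309, 9.697435],
    "segment_b": [7.691970, 5.268597, 4.920808],
    "segment_c": [4.135875, 2.613439, 5.812696],
    "segment_d": [6.789527, 3.483833, 4.614983],
}

# Mean over folds for every segment
per_segment = {segment: mean(values) for segment, values in smape_by_segment.items()}

# Mean over all rows of the table, the same quantity as metrics_tft["SMAPE"].mean()
overall = mean(value for values in smape_by_segment.values() for value in values)

for segment, value in per_segment.items():
    print(f"{segment}: {value:.3f}")
print(f"overall: {overall:.3f}")
```

On the real dataframe the same aggregation would be `metrics_tft.groupby("segment")["SMAPE"].mean()`.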

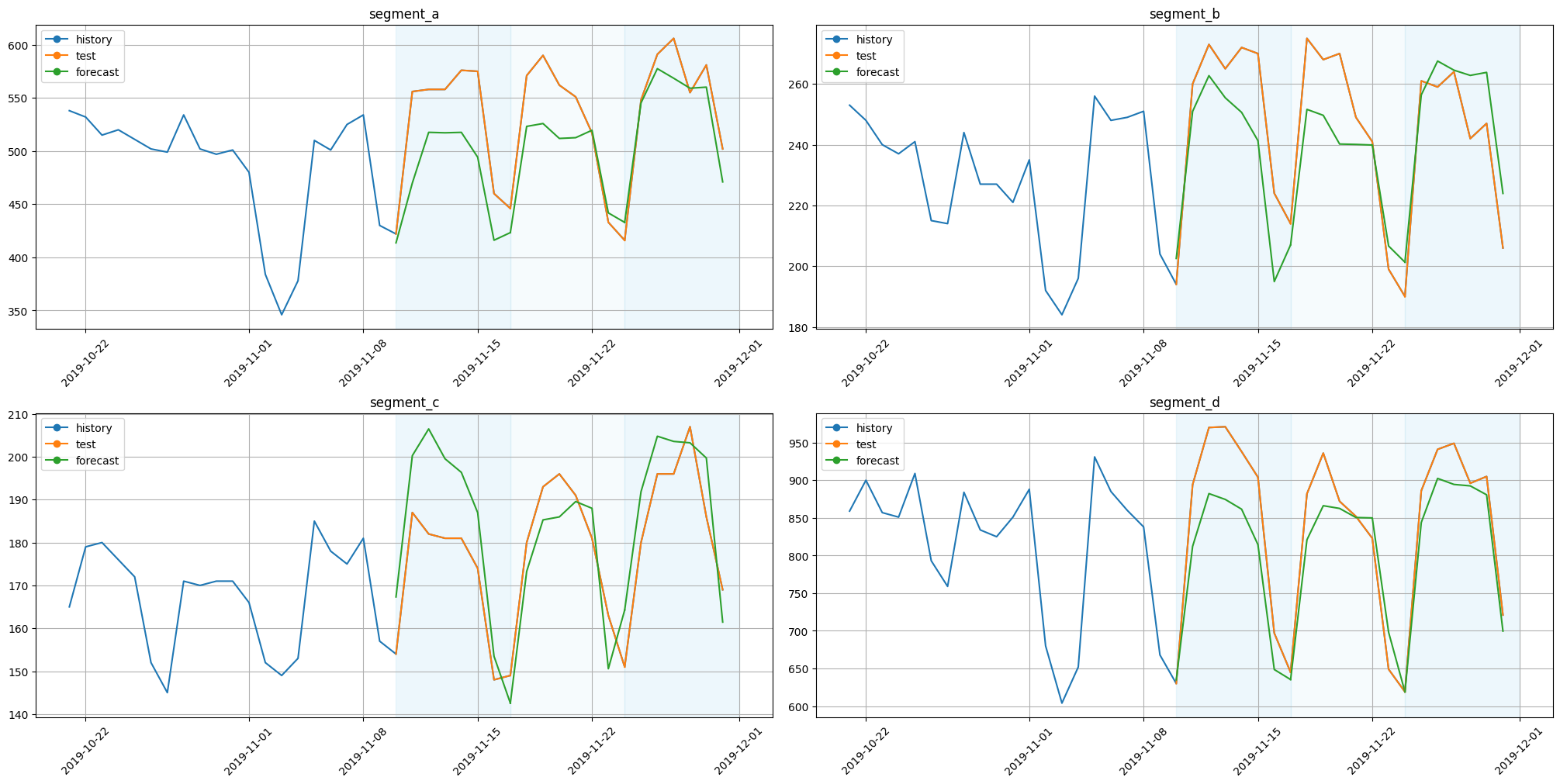

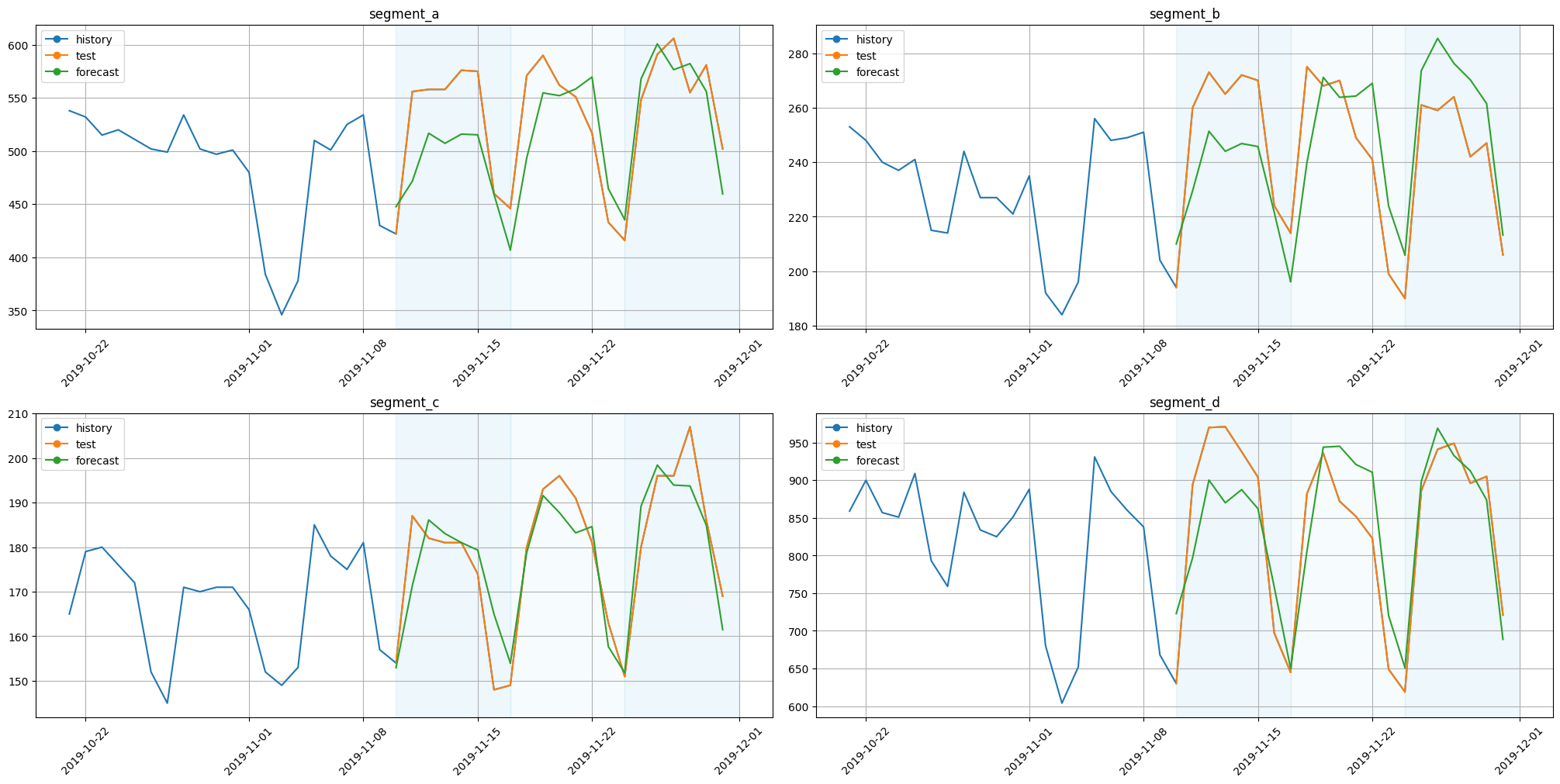

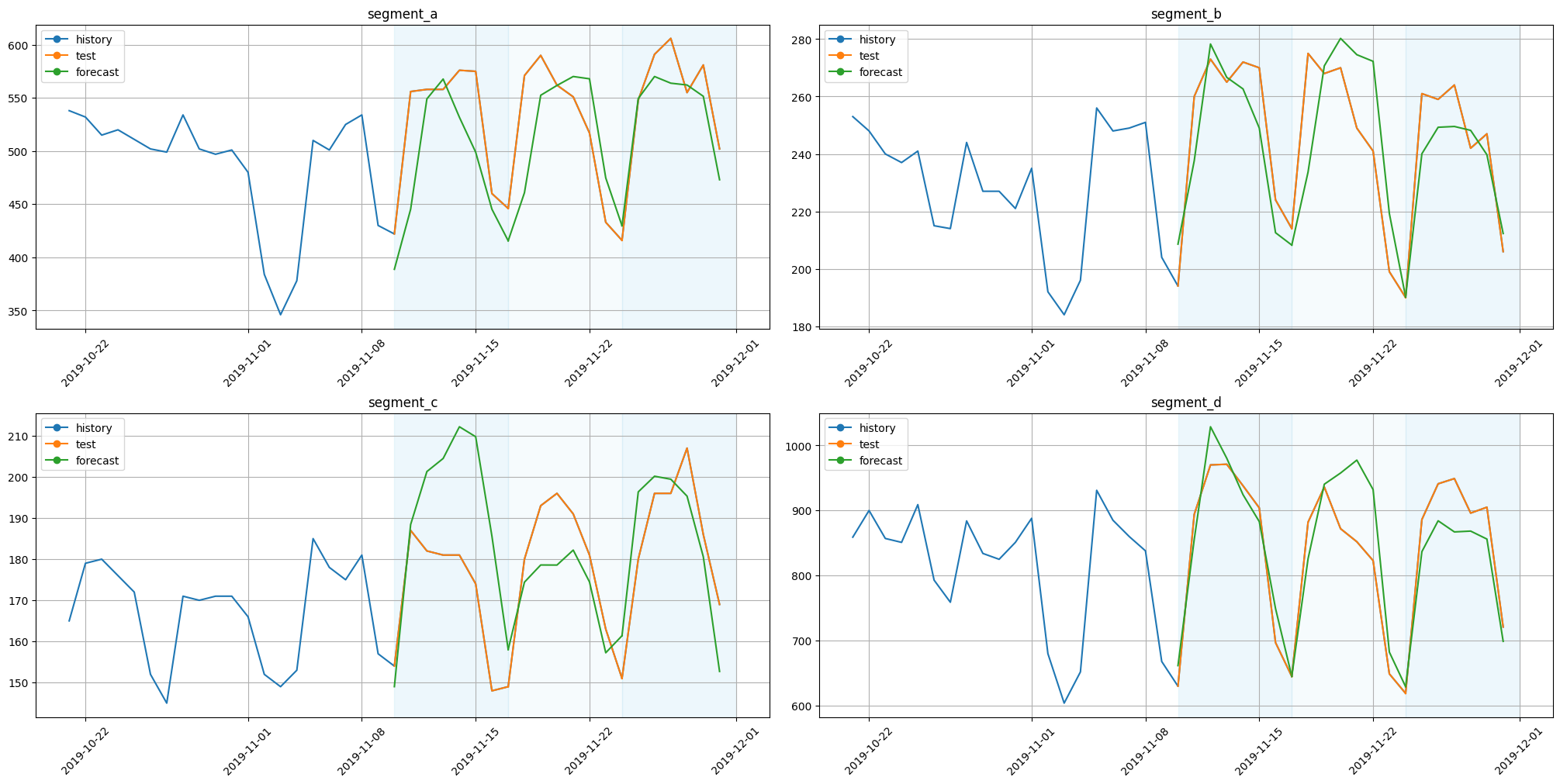

[44]:

plot_backtest(forecast_tft, ts, history_len=20)

3.5 TFTNative#

We recommend using our native implementation of TFT; the PyTorch Forecasting version will be removed in etna 3.0.0.

[45]:

from etna.models.nn import TFTNativeModel

[46]:

num_lags = 7

lag_columns = [f"target_lag_{HORIZON+i}" for i in range(num_lags)]

transform_lag = LagTransform(

in_column="target",

lags=[HORIZON + i for i in range(num_lags)],

out_column="target_lag",

)

transform_date = DateFlagsTransform(

day_number_in_week=True,

day_number_in_month=False,

day_number_in_year=False,

week_number_in_month=False,

week_number_in_year=False,

month_number_in_year=False,

season_number=False,

year_number=False,

is_weekend=False,

out_column="dateflag",

)

scaler = StandardScalerTransform(in_column=["target"])

encoder = SegmentEncoderTransform()

label_encoder = LabelEncoderTransform(

in_column="dateflag_day_number_in_week", strategy="none", out_column="dateflag_day_number_in_week_label"

)
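The lags above start at `HORIZON` rather than at 1. A rough sketch of the reason, assuming `HORIZON = 7` (the value is defined earlier in the notebook and is repeated here only as an assumption): a lag shorter than the horizon would need target values from the period being forecast. The helper `lag_source_offset` is hypothetical and exists only for this illustration.

```python
HORIZON = 7  # assumption: stands in for the value defined earlier in the notebook
num_lags = 7

lags = [HORIZON + i for i in range(num_lags)]
lag_columns = [f"target_lag_{lag}" for lag in lags]


def lag_source_offset(step: int, lag: int) -> int:
    """Position of the lagged value relative to the last known timestamp.

    `step` is the forecast step (1..HORIZON). A result <= 0 means the value
    lies in the known history; a positive result would point into the future.
    """
    return step - lag


# Every forecast step reads only values that are already in the history
all_known = all(
    lag_source_offset(step, lag) <= 0 for step in range(1, HORIZON + 1) for lag in lags
)

print(lag_columns)
print(all_known)
```

With a lag of 6, for example, the last forecast step would need a value one step into the future (`lag_source_offset(7, 6) == 1`), which is why the shortest lag equals the horizon.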

[47]:

set_seed()

model_tft_native = TFTNativeModel(

encoder_length=2 * HORIZON,

decoder_length=HORIZON,

static_categoricals=["segment_code"],

time_varying_categoricals_encoder=["dateflag_day_number_in_week_label"],

time_varying_categoricals_decoder=["dateflag_day_number_in_week_label"],

time_varying_reals_encoder=["target"] + lag_columns,

time_varying_reals_decoder=lag_columns,

num_embeddings={"segment_code": len(ts.segments), "dateflag_day_number_in_week_label": 7},

n_heads=1,

num_layers=2,

hidden_size=32,

lr=0.0001,

train_batch_size=16,

trainer_params=dict(max_epochs=5, gradient_clip_val=0.1),

)

pipeline_tft_native = Pipeline(

model=model_tft_native, horizon=HORIZON, transforms=[transform_lag, scaler, transform_date, encoder, label_encoder]

)
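The `encoder_length` and `decoder_length` parameters define how much history the model sees and how many steps it predicts per sample. The sketch below shows one plain way to cut a series into such (encoder, decoder) pairs. The function `make_windows` is hypothetical, `HORIZON = 7` is an assumption, and the way etna actually samples training windows may differ.

```python
def make_windows(series, encoder_length, decoder_length):
    """Cut a series into consecutive (encoder, decoder) pairs with step 1."""
    total = encoder_length + decoder_length
    windows = []
    for start in range(len(series) - total + 1):
        encoder_part = series[start : start + encoder_length]
        decoder_part = series[start + encoder_length : start + total]
        windows.append((encoder_part, decoder_part))
    return windows


HORIZON = 7  # assumption: stands in for the value defined earlier in the notebook
series = list(range(30))  # toy series, the values are just positions

windows = make_windows(series, encoder_length=2 * HORIZON, decoder_length=HORIZON)

print(len(windows))
print(windows[0][0])  # first encoder part: 14 points of history
print(windows[0][1])  # first decoder part: the 7 points that follow
```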

[48]:

metrics_tft_native, forecast_tft_native, fold_info_tft_native = pipeline_tft_native.backtest(

ts, metrics=metrics, n_folds=3, n_jobs=1

)

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

GPU available: False, used: False

TPU available: False, using: 0 TPU cores

IPU available: False, using: 0 IPUs

HPU available: False, using: 0 HPUs

| Name | Type | Params

------------------------------------------------------------------------------

0 | loss | MSELoss | 0

1 | static_scalers | ModuleDict | 0

2 | static_embeddings | ModuleDict | 160

3 | time_varying_scalers_encoder | ModuleDict | 512

4 | time_varying_embeddings_encoder | ModuleDict | 256

5 | time_varying_scalers_decoder | ModuleDict | 448

6 | time_varying_embeddings_decoder | ModuleDict | 256

7 | static_variable_selection | VariableSelectionNetwork | 6.5 K

8 | encoder_variable_selection | VariableSelectionNetwork | 222 K

9 | decoder_variable_selection | VariableSelectionNetwork | 180 K

10 | static_covariate_encoder | StaticCovariateEncoder | 17.2 K

11 | lstm_encoder | LSTM | 16.9 K

12 | lstm_decoder | LSTM | 16.9 K

13 | gated_norm1 | GateAddNorm | 2.2 K

14 | temporal_fusion_decoder | TemporalFusionDecoder | 16.0 K

15 | gated_norm2 | GateAddNorm | 2.2 K

16 | output_fc | Linear | 33

------------------------------------------------------------------------------

481 K Trainable params

0 Non-trainable params

481 K Total params

1.927 Total estimated model params size (MB)

`Trainer.fit` stopped: `max_epochs=5` reached.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 17.9s remaining: 0.0s

GPU available: False, used: False

TPU available: False, using: 0 TPU cores

IPU available: False, using: 0 IPUs

HPU available: False, using: 0 HPUs

| Name | Type | Params

------------------------------------------------------------------------------

0 | loss | MSELoss | 0

1 | static_scalers | ModuleDict | 0

2 | static_embeddings | ModuleDict | 160

3 | time_varying_scalers_encoder | ModuleDict | 512

4 | time_varying_embeddings_encoder | ModuleDict | 256

5 | time_varying_scalers_decoder | ModuleDict | 448

6 | time_varying_embeddings_decoder | ModuleDict | 256

7 | static_variable_selection | VariableSelectionNetwork | 6.5 K

8 | encoder_variable_selection | VariableSelectionNetwork | 222 K

9 | decoder_variable_selection | VariableSelectionNetwork | 180 K

10 | static_covariate_encoder | StaticCovariateEncoder | 17.2 K

11 | lstm_encoder | LSTM | 16.9 K

12 | lstm_decoder | LSTM | 16.9 K

13 | gated_norm1 | GateAddNorm | 2.2 K

14 | temporal_fusion_decoder | TemporalFusionDecoder | 16.0 K

15 | gated_norm2 | GateAddNorm | 2.2 K

16 | output_fc | Linear | 33

------------------------------------------------------------------------------

481 K Trainable params

0 Non-trainable params

481 K Total params

1.927 Total estimated model params size (MB)

`Trainer.fit` stopped: `max_epochs=5` reached.

[Parallel(n_jobs=1)]: Done 2 out of 2 | elapsed: 36.2s remaining: 0.0s

GPU available: False, used: False

TPU available: False, using: 0 TPU cores

IPU available: False, using: 0 IPUs

HPU available: False, using: 0 HPUs

| Name | Type | Params

------------------------------------------------------------------------------

0 | loss | MSELoss | 0

1 | static_scalers | ModuleDict | 0

2 | static_embeddings | ModuleDict | 160

3 | time_varying_scalers_encoder | ModuleDict | 512

4 | time_varying_embeddings_encoder | ModuleDict | 256

5 | time_varying_scalers_decoder | ModuleDict | 448

6 | time_varying_embeddings_decoder | ModuleDict | 256

7 | static_variable_selection | VariableSelectionNetwork | 6.5 K

8 | encoder_variable_selection | VariableSelectionNetwork | 222 K

9 | decoder_variable_selection | VariableSelectionNetwork | 180 K

10 | static_covariate_encoder | StaticCovariateEncoder | 17.2 K

11 | lstm_encoder | LSTM | 16.9 K

12 | lstm_decoder | LSTM | 16.9 K

13 | gated_norm1 | GateAddNorm | 2.2 K

14 | temporal_fusion_decoder | TemporalFusionDecoder | 16.0 K

15 | gated_norm2 | GateAddNorm | 2.2 K

16 | output_fc | Linear | 33

------------------------------------------------------------------------------

481 K Trainable params

0 Non-trainable params

481 K Total params

1.927 Total estimated model params size (MB)

`Trainer.fit` stopped: `max_epochs=5` reached.

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 54.7s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 54.7s finished

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 0.1s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 2 out of 2 | elapsed: 0.1s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.2s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.2s finished

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 2 out of 2 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.1s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.1s finished

[49]:

score = metrics_tft_native["SMAPE"].mean()

print(f"Average SMAPE for TFTNative: {score:.3f}")

Average SMAPE for TFTNative: 6.185

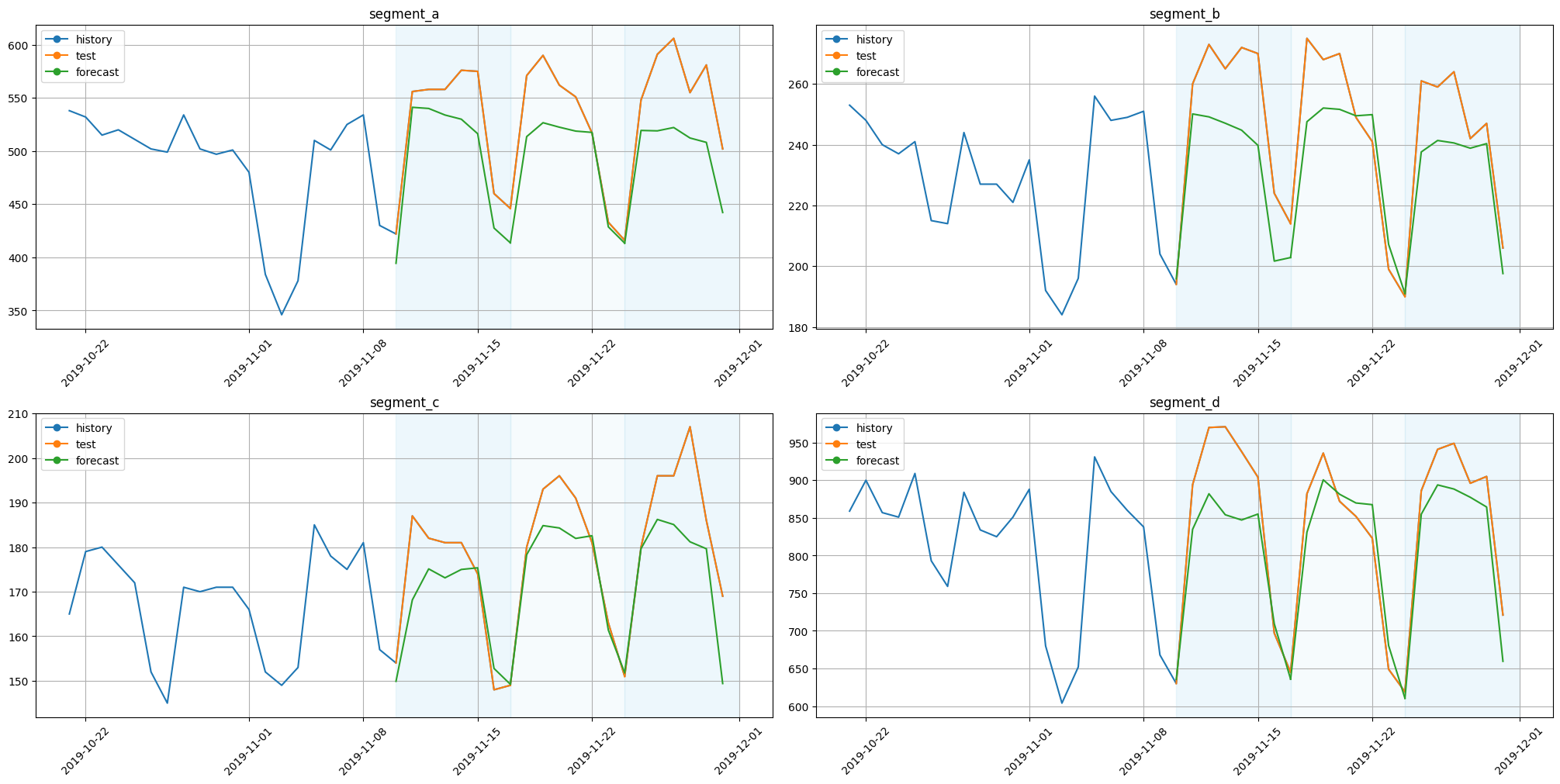

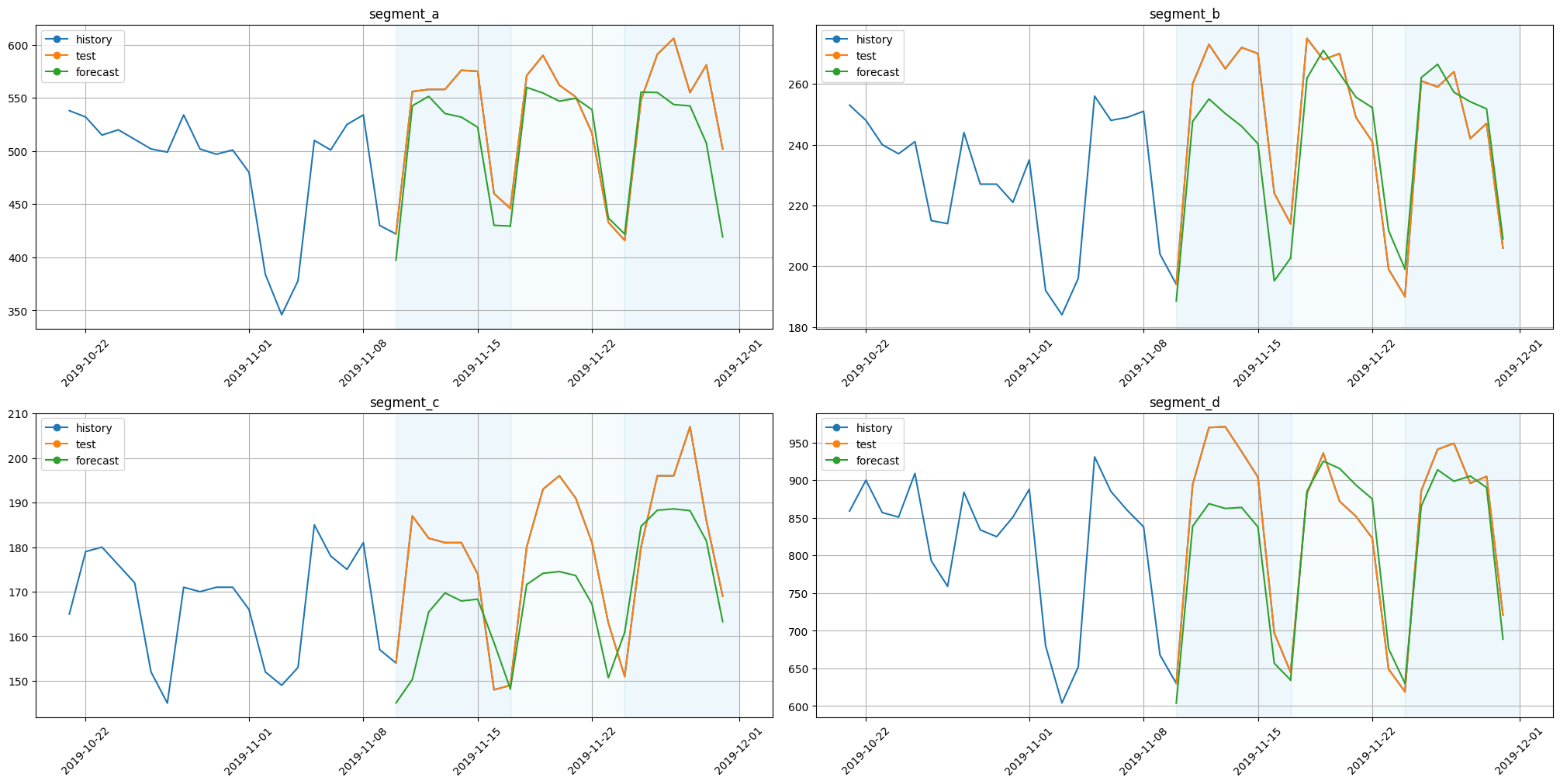

[50]:

plot_backtest(forecast_tft_native, ts, history_len=20)

3.6 RNN#

We’ll use an RNN model based on the LSTM cell.

[51]:

from etna.models.nn import RNNModel

[52]:

num_lags = 7

scaler = StandardScalerTransform(in_column="target")

transform_lag = LagTransform(

in_column="target",

lags=[HORIZON + i for i in range(num_lags)],

out_column="target_lag",

)

transform_date = DateFlagsTransform(

day_number_in_week=True,

day_number_in_month=False,

day_number_in_year=False,

week_number_in_month=False,

week_number_in_year=False,

month_number_in_year=False,

season_number=False,

year_number=False,

is_weekend=False,

out_column="dateflag",

)

segment_encoder = SegmentEncoderTransform()

label_encoder = LabelEncoderTransform(

in_column="dateflag_day_number_in_week", strategy="none", out_column="dateflag_day_number_in_week_label"

)

embedding_sizes = {"dateflag_day_number_in_week_label": (7, 7)}

[53]:

set_seed()

model_rnn = RNNModel(

input_size=num_lags + 1,

encoder_length=2 * HORIZON,

decoder_length=HORIZON,

embedding_sizes=embedding_sizes,

trainer_params=dict(max_epochs=5),

lr=1e-3,

)

pipeline_rnn = Pipeline(

model=model_rnn,

horizon=HORIZON,

transforms=[scaler, transform_lag, transform_date, label_encoder],

)
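The model summary printed by the backtest reports 4.3 K parameters for the LSTM and 17 for the projection layer. As a back-of-the-envelope check, these counts are consistent with a two-layer LSTM with hidden size 16 whose input is the 8 real-valued features plus a 7-dimensional day-of-week embedding. The hidden size and the number of layers are assumptions chosen to match the log; they are not set in the code above.

```python
def lstm_layer_params(input_size: int, hidden_size: int) -> int:
    """Parameters of one LSTM layer in the PyTorch layout.

    Four gates, each with input weights, recurrent weights and two bias vectors.
    """
    return 4 * (input_size * hidden_size + hidden_size * hidden_size + 2 * hidden_size)


num_lags = 7
input_size = num_lags + 1  # lags plus the target itself, as passed to RNNModel
embedding_dim = 7          # second element of embedding_sizes[...] above
hidden_size = 16           # assumption: inferred from the logged counts
num_layers = 2             # assumption: inferred from the logged counts

lstm_input = input_size + embedding_dim
lstm_params = lstm_layer_params(lstm_input, hidden_size) + (num_layers - 1) * lstm_layer_params(
    hidden_size, hidden_size
)
projection_params = hidden_size * 1 + 1  # linear layer from hidden state to one output

print(lstm_params)        # 4288, shown as 4.3 K in the log
print(projection_params)  # 17
```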

[54]:

metrics_rnn, forecast_rnn, fold_info_rnn = pipeline_rnn.backtest(ts, metrics=metrics, n_folds=3, n_jobs=1)

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

GPU available: False, used: False

TPU available: False, using: 0 TPU cores

IPU available: False, using: 0 IPUs

HPU available: False, using: 0 HPUs

| Name | Type | Params

----------------------------------------------

0 | loss | MSELoss | 0

1 | embedding | MultiEmbedding | 56

2 | rnn | LSTM | 4.3 K

3 | projection | Linear | 17

----------------------------------------------

4.4 K Trainable params

0 Non-trainable params

4.4 K Total params

0.017 Total estimated model params size (MB)

`Trainer.fit` stopped: `max_epochs=5` reached.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 2.3s remaining: 0.0s

GPU available: False, used: False

TPU available: False, using: 0 TPU cores

IPU available: False, using: 0 IPUs

HPU available: False, using: 0 HPUs

| Name | Type | Params

----------------------------------------------

0 | loss | MSELoss | 0

1 | embedding | MultiEmbedding | 56

2 | rnn | LSTM | 4.3 K

3 | projection | Linear | 17

----------------------------------------------

4.4 K Trainable params

0 Non-trainable params

4.4 K Total params

0.017 Total estimated model params size (MB)

`Trainer.fit` stopped: `max_epochs=5` reached.

[Parallel(n_jobs=1)]: Done 2 out of 2 | elapsed: 4.7s remaining: 0.0s

GPU available: False, used: False

TPU available: False, using: 0 TPU cores

IPU available: False, using: 0 IPUs

HPU available: False, using: 0 HPUs

| Name | Type | Params

----------------------------------------------

0 | loss | MSELoss | 0

1 | embedding | MultiEmbedding | 56

2 | rnn | LSTM | 4.3 K

3 | projection | Linear | 17

----------------------------------------------

4.4 K Trainable params

0 Non-trainable params

4.4 K Total params

0.017 Total estimated model params size (MB)

`Trainer.fit` stopped: `max_epochs=5` reached.

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 7.1s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 7.1s finished

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 0.1s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 2 out of 2 | elapsed: 0.1s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.2s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.2s finished

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 2 out of 2 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.1s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.1s finished

[55]:

score = metrics_rnn["SMAPE"].mean()

print(f"Average SMAPE for LSTM: {score:.3f}")

Average SMAPE for LSTM: 5.653

[56]:

plot_backtest(forecast_rnn, ts, history_len=20)

3.7 MLP#

A basic model built from linear layers and activations.

[57]:

from etna.models.nn import MLPModel

[58]:

num_lags = 14

transform_lag = LagTransform(

in_column="target",

lags=[HORIZON + i for i in range(num_lags)],

out_column="target_lag",

)

transform_date = DateFlagsTransform(

day_number_in_week=True,

day_number_in_month=False,

day_number_in_year=False,

week_number_in_month=False,

week_number_in_year=False,

month_number_in_year=False,

season_number=False,

year_number=False,

is_weekend=False,

out_column="dateflag",

)

segment_encoder = SegmentEncoderTransform()

label_encoder = LabelEncoderTransform(

in_column="dateflag_day_number_in_week", strategy="none", out_column="dateflag_day_number_in_week_label"

)

embedding_sizes = {"dateflag_day_number_in_week_label": (7, 7)}

[59]:

set_seed()

model_mlp = MLPModel(

input_size=num_lags,

hidden_size=[16],

embedding_sizes=embedding_sizes,

decoder_length=HORIZON,

trainer_params=dict(max_epochs=50, gradient_clip_val=0.1),

lr=0.001,

train_batch_size=16,

)

metrics = [SMAPE(), MAPE(), MAE()]

pipeline_mlp = Pipeline(model=model_mlp, transforms=[transform_lag, transform_date, label_encoder], horizon=HORIZON)
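The training log for this model reports 369 parameters in the `mlp` block. A small arithmetic sketch reproduces that number, assuming the 7-dimensional day-of-week embedding is concatenated with the 14 lag features and the network ends in a single-output linear layer. Both points are inferred from the logged count rather than from the library code.

```python
def linear_params(in_features: int, out_features: int) -> int:
    """Weights plus biases of one fully connected layer."""
    return in_features * out_features + out_features


num_lags = 14
embedding_dim = 7    # second element of embedding_sizes[...] above
hidden_sizes = [16]  # hidden_size passed to MLPModel

# assumption: embedding output is concatenated with the lags, final layer has one output
layer_sizes = [num_lags + embedding_dim] + hidden_sizes + [1]
mlp_params = sum(linear_params(a, b) for a, b in zip(layer_sizes, layer_sizes[1:]))

print(layer_sizes)
print(mlp_params)  # 369
```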

[60]:

metrics_mlp, forecast_mlp, fold_info_mlp = pipeline_mlp.backtest(ts, metrics=metrics, n_folds=3, n_jobs=1)

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

GPU available: False, used: False

TPU available: False, using: 0 TPU cores

IPU available: False, using: 0 IPUs

HPU available: False, using: 0 HPUs

| Name | Type | Params

---------------------------------------------

0 | loss | MSELoss | 0

1 | embedding | MultiEmbedding | 56

2 | mlp | Sequential | 369

---------------------------------------------

425 Trainable params

0 Non-trainable params

425 Total params

0.002 Total estimated model params size (MB)

`Trainer.fit` stopped: `max_epochs=50` reached.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 1.7s remaining: 0.0s

GPU available: False, used: False

TPU available: False, using: 0 TPU cores

IPU available: False, using: 0 IPUs

HPU available: False, using: 0 HPUs

| Name | Type | Params

---------------------------------------------

0 | loss | MSELoss | 0

1 | embedding | MultiEmbedding | 56

2 | mlp | Sequential | 369

---------------------------------------------

425 Trainable params

0 Non-trainable params

425 Total params

0.002 Total estimated model params size (MB)

`Trainer.fit` stopped: `max_epochs=50` reached.

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 4.9s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 4.9s finished

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 0.1s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 2 out of 2 | elapsed: 0.1s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.2s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.2s finished

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 2 out of 2 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.1s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.1s finished

[61]:

score = metrics_mlp["SMAPE"].mean()

print(f"Average SMAPE for MLP: {score:.3f}")

Average SMAPE for MLP: 5.929

[62]:

plot_backtest(forecast_mlp, ts, history_len=20)

3.8 Deep State Model#

Deep State Model works well with multiple similar time series: it infers shared patterns from them.

We have to specify the type of seasonality in the data, which depends on the data granularity; the SeasonalitySSM class is responsible for this. In this example we have daily data, so we use day-of-week (7 seasons) and day-of-month (31 seasons) models. The trend component is set with the LevelTrendSSM class. The model also uses time-based features (day-of-week and day-of-month) and a time-independent feature that identifies the segment of the time series.

[63]:

from etna.models.nn import DeepStateModel

from etna.models.nn.deepstate import CompositeSSM

from etna.models.nn.deepstate import LevelTrendSSM

from etna.models.nn.deepstate import SeasonalitySSM

[64]:

from etna.transforms import FilterFeaturesTransform

[65]:

num_lags = 7

transforms = [

SegmentEncoderTransform(),

StandardScalerTransform(in_column="target"),

DateFlagsTransform(

day_number_in_week=True,

day_number_in_month=True,

day_number_in_year=False,

week_number_in_month=False,

week_number_in_year=False,

month_number_in_year=False,

season_number=False,

year_number=False,

is_weekend=False,

out_column="dateflag",

),

LagTransform(

in_column="target",

lags=[HORIZON + i for i in range(num_lags)],

out_column="target_lag",

),

LabelEncoderTransform(

in_column="dateflag_day_number_in_week", strategy="none", out_column="dateflag_day_number_in_week_label"

),

LabelEncoderTransform(

in_column="dateflag_day_number_in_month", strategy="none", out_column="dateflag_day_number_in_month_label"

),

FilterFeaturesTransform(exclude=["dateflag_day_number_in_week", "dateflag_day_number_in_month"]),

]

embedding_sizes = {

"dateflag_day_number_in_week_label": (7, 7),

"dateflag_day_number_in_month_label": (31, 7),

"segment_code": (4, 7),

}
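Each value in `embedding_sizes` is a pair of (number of categories, embedding dimension). The training logs in this notebook report 56 embedding parameters for the RNN and MLP models and 315 for the Deep State Model. Those numbers match the arithmetic below if every embedding table holds one extra row, presumably for unseen categories. The extra row is an inference from the logs, not something stated in the code.

```python
def embedding_params(embedding_sizes: dict, extra_rows: int = 1) -> int:
    """Total size of all embedding tables.

    extra_rows is an assumption: one additional row per table reproduces
    the parameter counts shown in the training logs.
    """
    return sum((n_categories + extra_rows) * dim for n_categories, dim in embedding_sizes.values())


rnn_embedding_sizes = {"dateflag_day_number_in_week_label": (7, 7)}
dsm_embedding_sizes = {
    "dateflag_day_number_in_week_label": (7, 7),
    "dateflag_day_number_in_month_label": (31, 7),
    "segment_code": (4, 7),
}

print(embedding_params(rnn_embedding_sizes))  # 56
print(embedding_params(dsm_embedding_sizes))  # 315
```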

[66]:

monthly_smm = SeasonalitySSM(num_seasons=31, timestamp_transform=lambda x: x.day - 1)

weekly_smm = SeasonalitySSM(num_seasons=7, timestamp_transform=lambda x: x.weekday())
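The `timestamp_transform` functions map a timestamp to a zero-based season index. The sketch below applies the same two expressions to `datetime.date` objects, which expose `.day` and `.weekday()` just like pandas timestamps. The names `monthly_index` and `weekly_index` are used only for this illustration.

```python
from datetime import date, timedelta


def monthly_index(x) -> int:
    """Day of month shifted to start at 0, giving 31 possible seasons."""
    return x.day - 1


def weekly_index(x) -> int:
    """Day of week with Monday as 0, giving 7 possible seasons."""
    return x.weekday()


for day in (date(2019, 1, 1), date(2019, 1, 31)):
    print(day, "weekly:", weekly_index(day), "monthly:", monthly_index(day))

# Check over a full year that the indices stay inside the declared number of seasons
days_2019 = [date(2019, 1, 1) + timedelta(days=i) for i in range(365)]
weekly_ok = all(0 <= weekly_index(d) < 7 for d in days_2019)
monthly_ok = all(0 <= monthly_index(d) < 31 for d in days_2019)
print(weekly_ok, monthly_ok)
```

The indices have to stay in the range `[0, num_seasons)`, which is why the day of month is shifted by one.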

[67]:

set_seed()

model_dsm = DeepStateModel(

ssm=CompositeSSM(seasonal_ssms=[weekly_smm, monthly_smm], nonseasonal_ssm=LevelTrendSSM()),

input_size=num_lags,

encoder_length=2 * HORIZON,

decoder_length=HORIZON,

embedding_sizes=embedding_sizes,

trainer_params=dict(max_epochs=5),

lr=1e-3,

)

pipeline_dsm = Pipeline(

model=model_dsm,

horizon=HORIZON,

transforms=transforms,

)

[68]:

metrics_dsm, forecast_dsm, fold_info_dsm = pipeline_dsm.backtest(ts, metrics=metrics, n_folds=3, n_jobs=1)

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

GPU available: False, used: False

TPU available: False, using: 0 TPU cores

IPU available: False, using: 0 IPUs

HPU available: False, using: 0 HPUs

| Name | Type | Params

----------------------------------------------

0 | embedding | MultiEmbedding | 315

1 | RNN | LSTM | 11.2 K

2 | projectors | ModuleDict | 5.0 K

----------------------------------------------

16.5 K Trainable params

0 Non-trainable params

16.5 K Total params

0.066 Total estimated model params size (MB)

`Trainer.fit` stopped: `max_epochs=5` reached.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 8.7s remaining: 0.0s

GPU available: False, used: False

TPU available: False, using: 0 TPU cores

IPU available: False, using: 0 IPUs

HPU available: False, using: 0 HPUs

| Name | Type | Params

----------------------------------------------

0 | embedding | MultiEmbedding | 315

1 | RNN | LSTM | 11.2 K

2 | projectors | ModuleDict | 5.0 K

----------------------------------------------

16.5 K Trainable params

0 Non-trainable params

16.5 K Total params

0.066 Total estimated model params size (MB)

`Trainer.fit` stopped: `max_epochs=5` reached.

[Parallel(n_jobs=1)]: Done 2 out of 2 | elapsed: 17.8s remaining: 0.0s

GPU available: False, used: False

TPU available: False, using: 0 TPU cores

IPU available: False, using: 0 IPUs

HPU available: False, using: 0 HPUs

| Name | Type | Params

----------------------------------------------

0 | embedding | MultiEmbedding | 315

1 | RNN | LSTM | 11.2 K

2 | projectors | ModuleDict | 5.0 K

----------------------------------------------

16.5 K Trainable params

0 Non-trainable params

16.5 K Total params

0.066 Total estimated model params size (MB)

`Trainer.fit` stopped: `max_epochs=5` reached.

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 27.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 27.0s finished

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 0.1s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 2 out of 2 | elapsed: 0.2s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.3s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.3s finished

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 2 out of 2 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.1s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.1s finished

[69]:

score = metrics_dsm["SMAPE"].mean()

print(f"Average SMAPE for DeepStateModel: {score:.3f}")

Average SMAPE for DeepStateModel: 5.520

[70]:

plot_backtest(forecast_dsm, ts, history_len=20)

3.9 N-BEATS Model#

This architecture is based on backward and forward residual links and a deep stack of fully connected layers.

There are two types of models in the library. The NBeatsGenericModel class implements a generic deep learning model, while the NBeatsInterpretableModel is augmented with certain inductive biases to be interpretable (trend and seasonality).
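The backward and forward residual links can be illustrated with a toy sketch in plain Python. Each block produces a backcast, which is subtracted from the block input before it reaches the next block, and a partial forecast, which is added to the running total. The blocks here are hypothetical stand-ins (`make_toy_block` is not part of etna); real N-BEATS blocks are stacks of fully connected layers.

```python
def run_stack(x, blocks):
    """Doubly residual stacking: subtract backcasts, sum partial forecasts."""
    residual = list(x)
    forecast = None
    for block in blocks:
        backcast, block_forecast = block(residual)
        # backward link: the next block only sees what this block did not explain
        residual = [r - b for r, b in zip(residual, backcast)]
        # forward link: partial forecasts are accumulated
        if forecast is None:
            forecast = list(block_forecast)
        else:
            forecast = [f + bf for f, bf in zip(forecast, block_forecast)]
    return residual, forecast


def make_toy_block(share, output_size):
    """Toy block: explains a fixed share of its input and forecasts the mean of that part."""

    def block(residual):
        backcast = [share * r for r in residual]
        level = sum(backcast) / len(backcast)
        return backcast, [level] * output_size

    return block


blocks = [make_toy_block(share=0.5, output_size=2) for _ in range(3)]
residual, forecast = run_stack([8.0, 8.0, 8.0, 8.0], blocks)

print(residual)  # what is left unexplained after three blocks
print(forecast)  # sum of the three partial forecasts
```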

[71]:

from etna.models.nn import NBeatsGenericModel

from etna.models.nn import NBeatsInterpretableModel

[72]:

set_seed()

model_nbeats_generic = NBeatsGenericModel(

input_size=2 * HORIZON,

output_size=HORIZON,

loss="smape",

stacks=30,

layers=4,

layer_size=256,

trainer_params=dict(max_epochs=1000),

lr=1e-3,

)

pipeline_nbeats_generic = Pipeline(

model=model_nbeats_generic,

horizon=HORIZON,

transforms=[],

)

[73]:

metrics_nbeats_generic, forecast_nbeats_generic, _ = pipeline_nbeats_generic.backtest(

ts, metrics=metrics, n_folds=3, n_jobs=1

)

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

GPU available: False, used: False

TPU available: False, using: 0 TPU cores

IPU available: False, using: 0 IPUs

HPU available: False, using: 0 HPUs

| Name | Type | Params

--------------------------------------

0 | model | NBeats | 206 K

1 | loss | NBeatsSMAPE | 0

--------------------------------------

206 K Trainable params

0 Non-trainable params

206 K Total params

0.826 Total estimated model params size (MB)

`Trainer.fit` stopped: `max_epochs=1000` reached.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 35.5s remaining: 0.0s

GPU available: False, used: False

TPU available: False, using: 0 TPU cores

IPU available: False, using: 0 IPUs

HPU available: False, using: 0 HPUs

| Name | Type | Params

--------------------------------------

0 | model | NBeats | 206 K

1 | loss | NBeatsSMAPE | 0

--------------------------------------

206 K Trainable params

0 Non-trainable params

206 K Total params

0.826 Total estimated model params size (MB)

`Trainer.fit` stopped: `max_epochs=1000` reached.

[Parallel(n_jobs=1)]: Done 2 out of 2 | elapsed: 1.2min remaining: 0.0s

GPU available: False, used: False

TPU available: False, using: 0 TPU cores

IPU available: False, using: 0 IPUs

HPU available: False, using: 0 HPUs

| Name | Type | Params

--------------------------------------

0 | model | NBeats | 206 K

1 | loss | NBeatsSMAPE | 0

--------------------------------------

206 K Trainable params

0 Non-trainable params

206 K Total params

0.826 Total estimated model params size (MB)

`Trainer.fit` stopped: `max_epochs=1000` reached.

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 1.8min remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 1.8min finished

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 2 out of 2 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.1s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.1s finished

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 2 out of 2 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.1s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.1s finished

[74]:

score = metrics_nbeats_generic["SMAPE"].mean()

print(f"Average SMAPE for N-BEATS Generic: {score:.3f}")

Average SMAPE for N-BEATS Generic: 6.027

[75]:

plot_backtest(forecast_nbeats_generic, ts, history_len=20)

[76]:

model_nbeats_interp = NBeatsInterpretableModel(

input_size=4 * HORIZON,

output_size=HORIZON,

loss="smape",

trend_layer_size=64,

seasonality_layer_size=256,

trainer_params=dict(max_epochs=2000),

lr=1e-3,

)

pipeline_nbeats_interp = Pipeline(

model=model_nbeats_interp,

horizon=HORIZON,

transforms=[],

)

[77]:

metrics_nbeats_interp, forecast_nbeats_interp, _ = pipeline_nbeats_interp.backtest(

ts, metrics=metrics, n_folds=3, n_jobs=1

)

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

GPU available: False, used: False

TPU available: False, using: 0 TPU cores

IPU available: False, using: 0 IPUs

HPU available: False, using: 0 HPUs

| Name | Type | Params

--------------------------------------

0 | model | NBeats | 224 K

1 | loss | NBeatsSMAPE | 0

--------------------------------------

223 K Trainable params

385 Non-trainable params

224 K Total params

0.896 Total estimated model params size (MB)

`Trainer.fit` stopped: `max_epochs=2000` reached.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 32.4s remaining: 0.0s

GPU available: False, used: False

TPU available: False, using: 0 TPU cores

IPU available: False, using: 0 IPUs

HPU available: False, using: 0 HPUs

| Name | Type | Params

--------------------------------------

0 | model | NBeats | 224 K

1 | loss | NBeatsSMAPE | 0

--------------------------------------

223 K Trainable params

385 Non-trainable params

224 K Total params

0.896 Total estimated model params size (MB)

`Trainer.fit` stopped: `max_epochs=2000` reached.

[Parallel(n_jobs=1)]: Done 2 out of 2 | elapsed: 1.1min remaining: 0.0s

GPU available: False, used: False

TPU available: False, using: 0 TPU cores

IPU available: False, using: 0 IPUs

HPU available: False, using: 0 HPUs

| Name | Type | Params

--------------------------------------

0 | model | NBeats | 224 K

1 | loss | NBeatsSMAPE | 0

--------------------------------------

223 K Trainable params

385 Non-trainable params

224 K Total params

0.896 Total estimated model params size (MB)

`Trainer.fit` stopped: `max_epochs=2000` reached.

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 1.7min remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 1.7min finished

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 2 out of 2 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.0s finished

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 2 out of 2 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.1s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.1s finished

[78]:

score = metrics_nbeats_interp["SMAPE"].mean()

print(f"Average SMAPE for N-BEATS Interpretable: {score:.3f}")

Average SMAPE for N-BEATS Interpretable: 5.656

[79]:

plot_backtest(forecast_nbeats_interp, ts, history_len=20)
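For a quick overview, the average SMAPE values printed in the sections above can be collected and sorted. The numbers are copied from the cell outputs. The models were trained with different numbers of epochs and different hyperparameters, so this is a convenience summary rather than a fair benchmark.

```python
# Average SMAPE values copied from the printed outputs in the sections above
scores = {
    "TFT": 5.626,
    "TFTNative": 6.185,
    "RNN (LSTM)": 5.653,
    "MLP": 5.929,
    "DeepStateModel": 5.520,
    "N-BEATS Generic": 6.027,
    "N-BEATS Interpretable": 5.656,
}

# Lower SMAPE is better, so sort in ascending order
ranking = sorted(scores.items(), key=lambda item: item[1])

for name, score in ranking:
    print(f"{name:<24}{score:.3f}")
```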

3.10 PatchTS Model#

A model with a transformer encoder that takes patches of the time series as input tokens and uses a linear decoder.
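To make the idea of patches concrete, here is a minimal sketch of how a context window could be cut into patches before being fed to an encoder. The helper `make_patches` and its `stride` argument are assumptions made for illustration and do not reproduce the model's internal code.

```python
import numpy as np


def make_patches(series, patch_len, stride):
    """Split a 1-D series into (possibly overlapping) patches."""
    series = np.asarray(series, dtype=float)
    # All windows of length patch_len, one starting at every position.
    windows = np.lib.stride_tricks.sliding_window_view(series, patch_len)
    # Keep every stride-th window.
    return windows[::stride]


context = np.arange(10)  # toy context window
patches = make_patches(context, patch_len=4, stride=2)
print(patches.shape)  # (4, 4)
print(patches[1])  # [2. 3. 4. 5.]
```

With `patch_len=1`, as in the cell below, each patch in this sketch holds a single time step, so the encoder would see one token per observation.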

[80]:

from etna.models.nn import PatchTSModel

[81]:

set_seed()

model_patchts = PatchTSModel(

decoder_length=HORIZON,

encoder_length=2 * HORIZON,

patch_len=1,

trainer_params=dict(max_epochs=30),

lr=1e-3,

train_batch_size=64,

)

pipeline_patchts = Pipeline(

model=model_patchts, horizon=HORIZON, transforms=[StandardScalerTransform(in_column="target")]

)

metrics_patchts, forecast_patchts, fold_info_patchts = pipeline_patchts.backtest(

ts, metrics=metrics, n_folds=3, n_jobs=1

)

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

GPU available: False, used: False

TPU available: False, using: 0 TPU cores

IPU available: False, using: 0 IPUs

HPU available: False, using: 0 HPUs

| Name | Type | Params

------------------------------------------

0 | loss | MSELoss | 0

1 | model | Sequential | 397 K

2 | projection | Sequential | 1.8 K

------------------------------------------

399 K Trainable params

0 Non-trainable params

399 K Total params

1.598 Total estimated model params size (MB)

`Trainer.fit` stopped: `max_epochs=30` reached.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 4.4min remaining: 0.0s

GPU available: False, used: False

TPU available: False, using: 0 TPU cores

IPU available: False, using: 0 IPUs

HPU available: False, using: 0 HPUs

| Name | Type | Params

------------------------------------------

0 | loss | MSELoss | 0

1 | model | Sequential | 397 K

2 | projection | Sequential | 1.8 K

------------------------------------------

399 K Trainable params

0 Non-trainable params

399 K Total params

1.598 Total estimated model params size (MB)

`Trainer.fit` stopped: `max_epochs=30` reached.

[Parallel(n_jobs=1)]: Done 2 out of 2 | elapsed: 8.9min remaining: 0.0s

GPU available: False, used: False

TPU available: False, using: 0 TPU cores

IPU available: False, using: 0 IPUs

HPU available: False, using: 0 HPUs

| Name | Type | Params

------------------------------------------

0 | loss | MSELoss | 0

1 | model | Sequential | 397 K

2 | projection | Sequential | 1.8 K

------------------------------------------

399 K Trainable params

0 Non-trainable params

399 K Total params

1.598 Total estimated model params size (MB)

`Trainer.fit` stopped: `max_epochs=30` reached.

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 13.5min remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 13.5min finished

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 2 out of 2 | elapsed: 0.1s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.1s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.1s finished

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 2 out of 2 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.1s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.1s finished

[82]:

score = metrics_patchts["SMAPE"].mean()

print(f"Average SMAPE for PatchTS: {score:.3f}")

Average SMAPE for PatchTS: 6.295

[83]:

plot_backtest(forecast_patchts, ts, history_len=20)

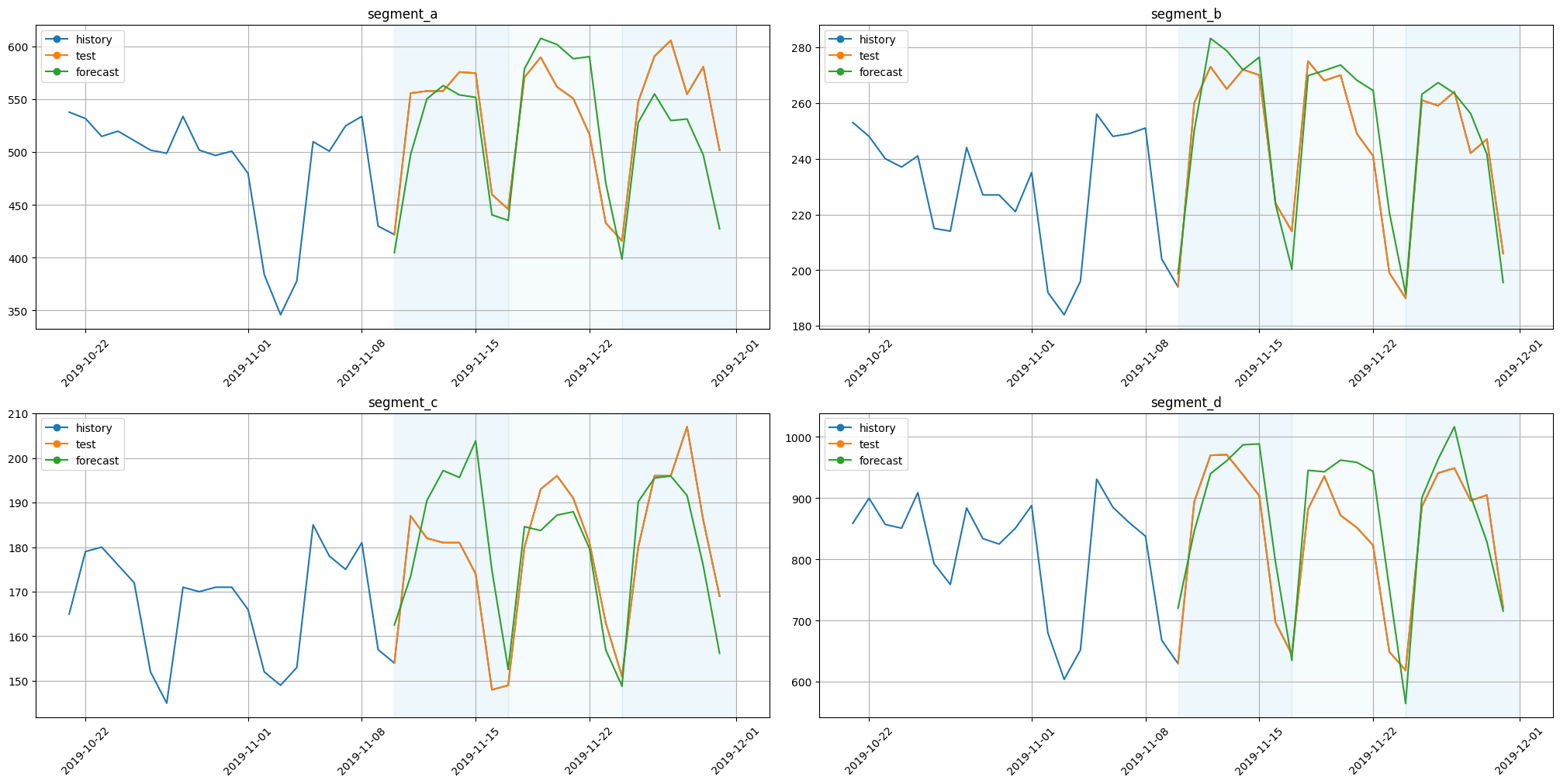

3.11 Chronos Model#

Chronos is a pretrained model for zero-shot forecasting.

[84]:

from etna.models.nn import ChronosModel

To get the list of available models, use list_models.

[85]:

ChronosModel.list_models()

[85]:

['amazon/chronos-t5-tiny',

'amazon/chronos-t5-mini',

'amazon/chronos-t5-small',

'amazon/chronos-t5-base',

'amazon/chronos-t5-large']

Let’s try the smallest model.

[86]:

set_seed()

model_chronos = ChronosModel(path_or_url="amazon/chronos-t5-tiny", encoder_length=2 * HORIZON, num_samples=10)

pipeline_chronos = Pipeline(model=model_chronos, horizon=HORIZON, transforms=[])

metrics_chronos, forecast_chronos, fold_info_chronos = pipeline_chronos.backtest(

ts, metrics=metrics, n_folds=3, n_jobs=1

)

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 0.1s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 2 out of 2 | elapsed: 0.1s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.1s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.1s finished

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 0.1s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 2 out of 2 | elapsed: 0.2s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.3s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.3s finished

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 2 out of 2 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.0s finished

[87]:

score = metrics_chronos["SMAPE"].mean()

print(f"Average SMAPE for Chronos tiny: {score:.3f}")

Average SMAPE for Chronos tiny: 12.999

Not good. Let’s try setting encoder_length to the length of the available history. The available history differs from fold to fold, so you can set encoder_length to the length of the initial dataset: the model will then receive all available history as context.
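The effect of the two settings can be illustrated with a small sketch. It assumes the context is simply the most recent points of the available history, capped at encoder_length. The helper `context_window`, the dataset length, and the horizon below are hypothetical.

```python
def context_window(history, encoder_length):
    """Return the most recent points, at most `encoder_length` of them."""
    # A negative slice start longer than the list returns the whole list,
    # so a short history is passed through unchanged.
    return history[-encoder_length:] if encoder_length > 0 else []


full_length = 100  # hypothetical dataset length
horizon = 7  # hypothetical forecast horizon
series = list(range(full_length))

for fold in range(3):
    # Each backtest fold sees a different amount of history.
    available = series[: full_length - (3 - fold) * horizon]
    short_ctx = context_window(available, 2 * horizon)
    long_ctx = context_window(available, full_length)
    print(fold, len(available), len(short_ctx), len(long_ctx))
```

In this sketch the short setting always yields 14 points, while the long setting yields everything available in each fold, which is the behaviour the paragraph above relies on.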

[88]:

dataset_length = ts.size()[0]

[89]:

set_seed()

model_chronos = ChronosModel(path_or_url="amazon/chronos-t5-tiny", encoder_length=dataset_length, num_samples=10)

pipeline_chronos = Pipeline(model=model_chronos, horizon=HORIZON, transforms=[])

metrics_chronos, forecast_chronos, fold_info_chronos = pipeline_chronos.backtest(

ts, metrics=metrics, n_folds=3, n_jobs=1

)

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 2 out of 2 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.1s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.1s finished

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 0.2s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 2 out of 2 | elapsed: 0.4s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.6s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.6s finished

[Parallel(n_jobs=1)]: Using backend SequentialBackend with 1 concurrent workers.

[Parallel(n_jobs=1)]: Done 1 out of 1 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 2 out of 2 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.0s remaining: 0.0s

[Parallel(n_jobs=1)]: Done 3 out of 3 | elapsed: 0.0s finished

[90]:

score = metrics_chronos["SMAPE"].mean()

print(f"Average SMAPE for Chronos tiny with long context: {score:.3f}")

Average SMAPE for Chronos tiny with long context: 7.094

Better. Let’s try a more complex model.

[91]: